
Improving Image Restoration by Revisiting Global Information Aggregation

By Xiaojie Chu, Liangyu Chen, Chengpeng Chen, Xin Lu

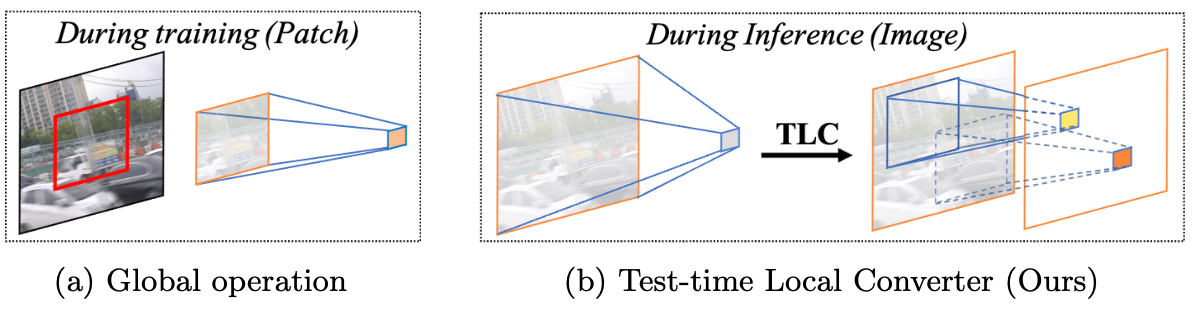

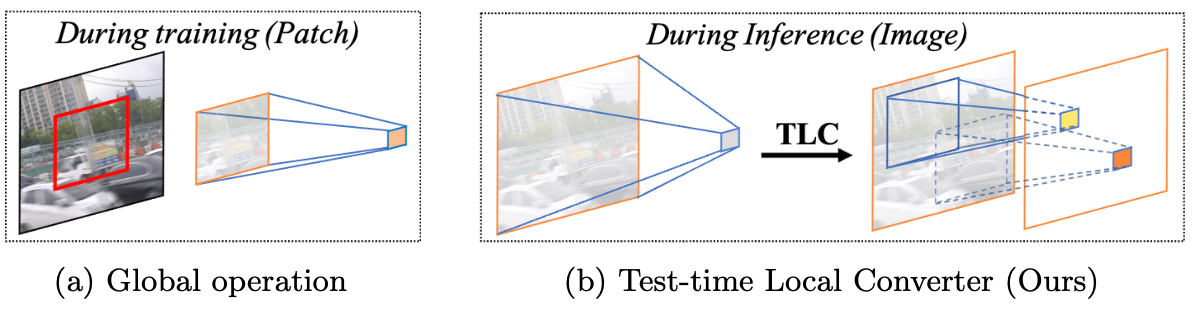

This repository is the official implementation of Improving Image Restoration by Revisiting Global Information Aggregation (ECCV 2022). We propose the Test-time Local Converter (TLC), which converts global operations to local ones so that, at inference, they extract representations from local spatial regions of the features, as in the training phase. Our approach requires no retraining or fine-tuning and incurs only marginal extra cost.

Abstract

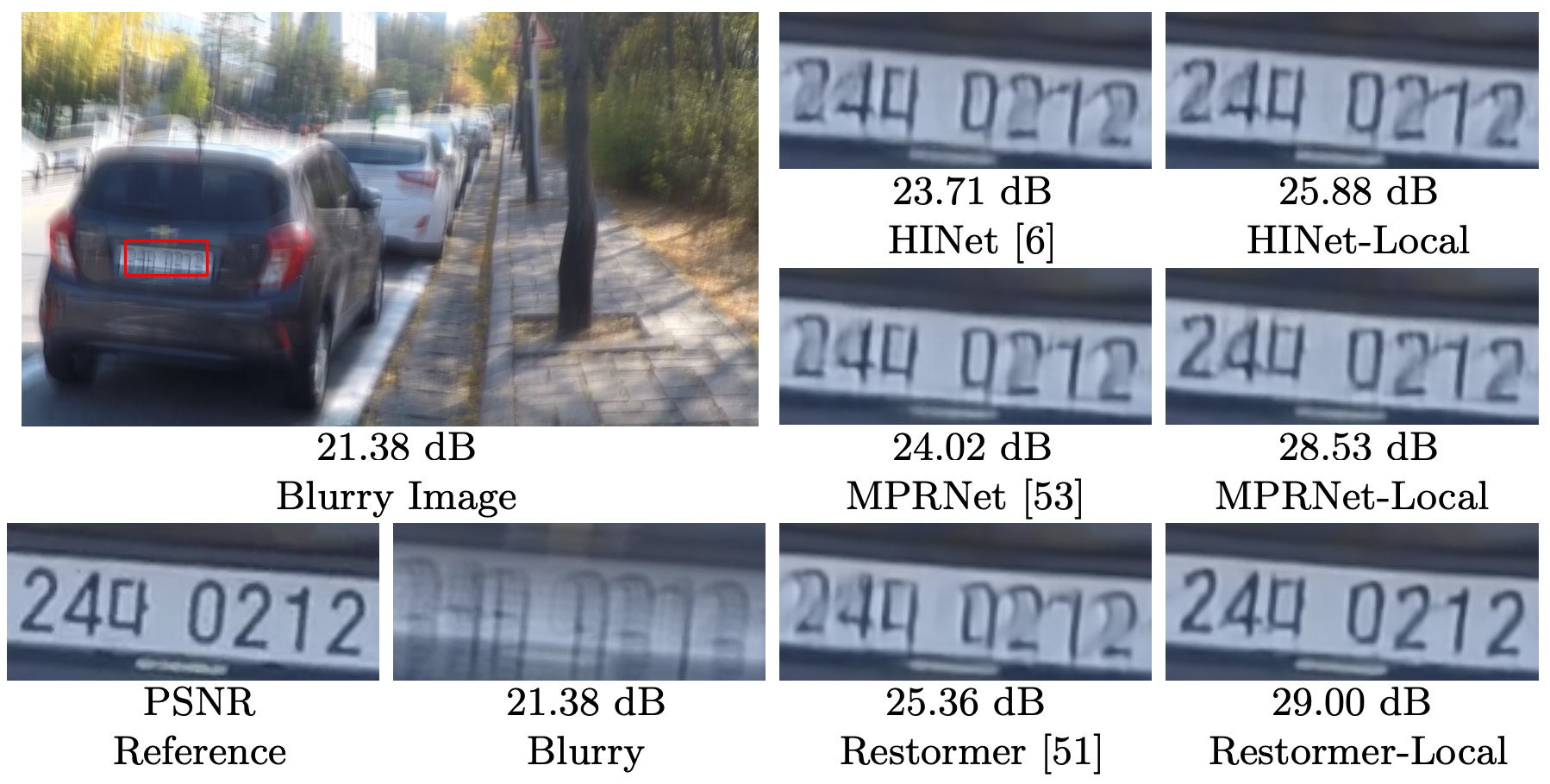

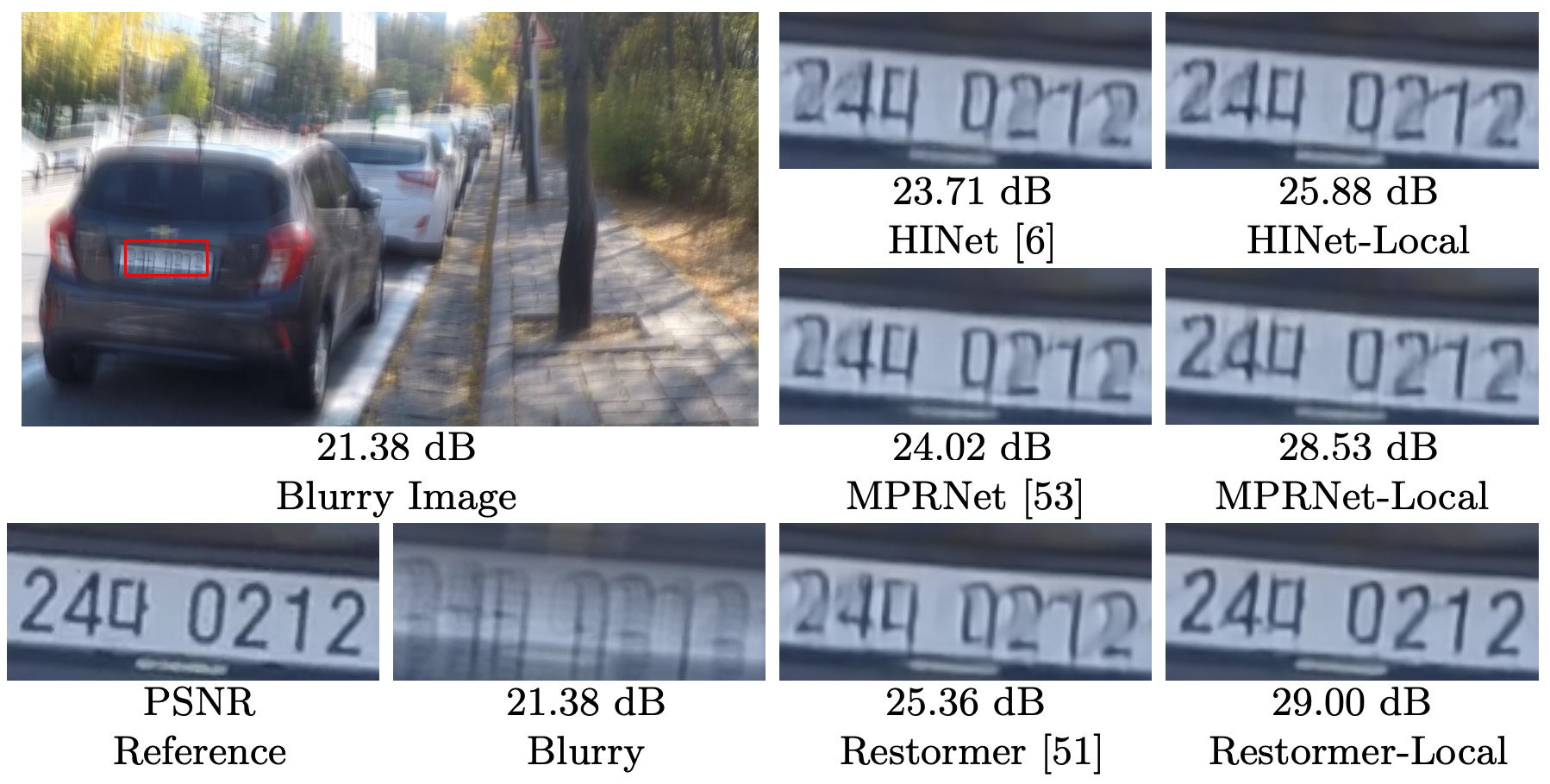

Global operations, such as global average pooling, are widely used in top-performing image restorers. They aggregate global information from input features along the entire spatial dimensions, but they behave differently during training and inference in image restoration tasks: during training they operate on cropped patches, whereas during inference they operate on full-resolution images. This paper revisits global information aggregation and finds that the image-based features during inference have a different distribution from the patch-based features during training. This train-test inconsistency hurts model performance, yet it has been largely overlooked by previous works. To reduce the inconsistency and improve test-time performance, we propose a simple method called Test-time Local Converter (TLC). TLC converts global operations to local ones only during inference, so that they aggregate features within local spatial regions rather than over the entire large image. The proposed method can be applied to various global modules (e.g., normalization, channel attention, and spatial attention) with negligible cost. Without any fine-tuning, TLC improves state-of-the-art results on several image restoration tasks, including single-image motion deblurring, video deblurring, defocus deblurring, and image denoising. In particular, with TLC, our Restormer-Local improves the state-of-the-art result in single-image deblurring from 32.92 dB to 33.57 dB on the GoPro dataset.
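To make the global-to-local conversion concrete, here is a minimal pure-Python sketch for one global operation, average pooling. `global_avg_pool` produces a single statistic for the whole input, which differs between a training crop and a full-resolution test image; `local_avg_pool` instead gives every position the mean of a `k`-by-`k` window, computed from a summed-area table and edge-replicated back to the input size. The function names and the window size `k` are illustrative assumptions (in practice the window would be chosen to match the statistics seen on training patches); this is not the repository's implementation.

```python
from typing import List

Grid = List[List[float]]  # a single-channel H x W feature map


def global_avg_pool(x: Grid) -> float:
    """Global average pooling: one mean over the entire spatial extent."""
    return sum(map(sum, x)) / (len(x) * len(x[0]))


def local_avg_pool(x: Grid, k: int) -> Grid:
    """Local average pooling with a k-by-k window (clipped to the input size).

    Each valid window mean is computed in O(1) from a summed-area table; the
    map of valid means is then edge-replicated back to H x W so that every
    position receives its own local statistic.
    """
    h, w = len(x), len(x[0])
    k1, k2 = min(h, k), min(w, k)
    # Summed-area table with a leading zero row/column:
    # s[i][j] = sum of x[r][c] for r < i and c < j.
    s = [[0.0] * (w + 1) for _ in range(h + 1)]
    for i in range(h):
        for j in range(w):
            s[i + 1][j + 1] = x[i][j] + s[i][j + 1] + s[i + 1][j] - s[i][j]
    # Means of all valid k1 x k2 windows: shape (h - k1 + 1) x (w - k2 + 1).
    valid = [
        [
            (s[i + k1][j + k2] - s[i][j + k2] - s[i + k1][j] + s[i][j]) / (k1 * k2)
            for j in range(w - k2 + 1)
        ]
        for i in range(h - k1 + 1)
    ]
    # Replicate-pad back to h x w; when k - 1 is odd, the extra row/column
    # goes to the bottom/right.
    top, left = (k1 - 1) // 2, (k2 - 1) // 2
    vh, vw = len(valid), len(valid[0])
    return [
        [valid[min(max(i - top, 0), vh - 1)][min(max(j - left, 0), vw - 1)] for j in range(w)]
        for i in range(h)
    ]


if __name__ == "__main__":
    image = [[float(4 * r + c) for c in range(4)] for r in range(4)]
    print(global_avg_pool(image))
    print(local_avg_pool(image, 2)[0])
```

With a window at least as large as the input, `local_avg_pool` returns the global mean at every position, so on inputs the size of a training patch the local operation matches the global one. This is one way to see why such a conversion can be applied at test time without retraining.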

Main Results

Models with TLC are denoted by the -Local suffix. Gains over the corresponding baseline are shown in parentheses.

| Method | GoPro PSNR (dB) | HIDE PSNR (dB) |
|---|---|---|
| HINet | 32.71 | 30.33 |
| HINet-Local (ours) | 33.08 (+0.37) | 30.66 (+0.33) |
| MPRNet | 32.66 | 30.96 |
| MPRNet-Local (ours) | 33.31 (+0.65) | 31.19 (+0.23) |
| Restormer | 32.92 | 31.22 |
| Restormer-Local (ours) | 33.57 (+0.65) | 31.49 (+0.27) |
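The gains in parentheses are the -Local PSNR minus the corresponding baseline PSNR. A few lines of Python reproduce them from the table values; the dictionary layout is just an illustrative choice.

```python
# PSNR (dB) from the Main Results table: (baseline, -Local) for each dataset.
RESULTS = {
    "HINet": {"GoPro": (32.71, 33.08), "HIDE": (30.33, 30.66)},
    "MPRNet": {"GoPro": (32.66, 33.31), "HIDE": (30.96, 31.19)},
    "Restormer": {"GoPro": (32.92, 33.57), "HIDE": (31.22, 31.49)},
}


def psnr_gains(results):
    """Return the PSNR gain (Local minus baseline, in dB), rounded to 2 decimals."""
    return {
        model: {dataset: round(local - base, 2) for dataset, (base, local) in per_dataset.items()}
        for model, per_dataset in results.items()
    }


for model, gains in psnr_gains(RESULTS).items():
    print(model, gains)
```

Rounding to two decimals matches the table's precision and hides floating-point noise from the subtraction.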

Usage

Installation

This implementation is based on BasicSR, an open-source toolbox for image/video restoration tasks.

```shell
git clone https://github.com/megvii-research/TLC.git
cd TLC
pip install -r requirements.txt
python setup.py develop
```
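After `python setup.py develop`, one quick way to confirm the install is to check that `basicsr` resolves as a module in the same interpreter. This sketch assumes the develop install exposes the repository's `basicsr` directory under that name; it only locates the package and does not import it.

```python
import importlib.util


def is_importable(module: str) -> bool:
    """Return True if a top-level module can be located (without importing it)."""
    return importlib.util.find_spec(module) is not None


if __name__ == "__main__":
    # Assumption: the develop install registered the repo's `basicsr` package.
    print("basicsr found:", is_importable("basicsr"))
```

Run it with the same `python` used for the install; a `False` result usually means a different interpreter or virtual environment is active.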

Quick Start (Single Image Inference)

- `python basicsr/demo.py -opt options/demo/demo.yml`
  - modify your input and output paths
  - define the network
  - set the pretrained model; it must match the defined network
    - for pretrained models, see here

Evaluation

Image Deblur - GoPro dataset

- Prepare data
- Evaluate
  - Download the pretrained HINet to `./experiments/pretrained_models/HINet-GoPro.pth`, then run:
    `python basicsr/test.py -opt options/test/GoPro/HINetLocal-GoPro.yml`
  - Download the pretrained MPRNet to `./experiments/pretrained_models/MPRNet-GoPro.pth`, then run:
    `python basicsr/test.py -opt options/test/GoPro/MPRNetLocal-GoPro.yml`
  - Download the pretrained Restormer to `./experiments/pretrained_models/Restormer-GoPro.pth`, then run:
    `python basicsr/test.py -opt options/test/GoPro/RestormerLocal-GoPro.yml`

Image Deblur - HIDE dataset

HIDE is evaluated with the GoPro-trained models.

- Prepare data
- Evaluate
  - Download the pretrained HINet to `./experiments/pretrained_models/HINet-GoPro.pth`, then run:
    `python basicsr/test.py -opt options/test/HIDE/HINetLocal-HIDE.yml`
  - Download the pretrained MPRNet to `./experiments/pretrained_models/MPRNet-GoPro.pth`, then run:
    `python basicsr/test.py -opt options/test/HIDE/MPRNetLocal-HIDE.yml`
  - Download the pretrained Restormer to `./experiments/pretrained_models/Restormer-GoPro.pth`, then run:
    `python basicsr/test.py -opt options/test/HIDE/RestormerLocal-HIDE.yml`
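The per-model evaluation steps can also be scripted. The sketch below builds the `basicsr/test.py` command for each model and dataset, assuming it is run from the repository root and that every config follows the `<Model>Local-<Dataset>.yml` naming pattern; check `options/test/` for the exact file names. With `dry_run=True` (the default) it only prints the commands.

```python
import subprocess
import sys
from pathlib import Path

MODELS = ("HINet", "MPRNet", "Restormer")
DATASETS = ("GoPro", "HIDE")


def eval_jobs(models=MODELS, datasets=DATASETS):
    """Yield (weights_path, command) pairs for every dataset/model combination."""
    for dataset in datasets:
        for model in models:
            # All checkpoints are GoPro-trained; HIDE reuses the same weights.
            weights = Path("experiments/pretrained_models") / f"{model}-GoPro.pth"
            # ASSUMED naming pattern: <Model>Local-<Dataset>.yml
            config = Path("options/test") / dataset / f"{model}Local-{dataset}.yml"
            yield weights, [sys.executable, "basicsr/test.py", "-opt", config.as_posix()]


def run_all(dry_run=True):
    """Print (dry run) or execute each evaluation, skipping missing checkpoints."""
    for weights, cmd in eval_jobs():
        if dry_run:
            print(" ".join(cmd))
        elif not weights.exists():
            print(f"skipping {cmd[-1]}: missing {weights}")
        else:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run_all(dry_run=True)
```

Using `sys.executable` runs `test.py` with the same interpreter (and therefore the same installed `basicsr`) as the script itself.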

Tricks: for faster inference, change `fast_imp: false` (naive implementation) to `fast_imp: true` (faster implementation) in the MPRNetLocal config.
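The `fast_imp` switch can also be flipped without hand-editing the shipped config. This minimal sketch copies an option file and replaces the literal string `fast_imp: false` with `fast_imp: true`; the helper name `enable_fast_imp` is hypothetical, and it assumes the key is spelled exactly as above.

```python
from pathlib import Path


def enable_fast_imp(src: Path, dst: Path) -> int:
    """Copy a YAML option file, switching every 'fast_imp: false' to 'fast_imp: true'.

    Returns the number of replacements made. A plain text substitution keeps
    the rest of the file (comments, key order, formatting) untouched.
    """
    text = src.read_text(encoding="utf-8")
    count = text.count("fast_imp: false")
    dst.write_text(text.replace("fast_imp: false", "fast_imp: true"), encoding="utf-8")
    return count
```

For example, `enable_fast_imp(Path("options/test/GoPro/MPRNetLocal-GoPro.yml"), Path("options/test/GoPro/MPRNetLocal-GoPro-fast.yml"))` writes a copy that can be passed to `basicsr/test.py -opt`. Writing to a separate file keeps the original naive-implementation config available for comparison, and a return value of 0 signals that the key was not found.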

News

Our work has been applied to the following:

2022.06.19 NAFSSR: Stereo Image Super-Resolution Using NAFNet won 1st place in the NTIRE 2022 Stereo Image Super-resolution Challenge! It was selected for an oral presentation at the CVPR 2022 NTIRE workshop 🎉 The presentation video, slides, and poster are now available. [Code]

2022.04.12 Simple Baselines for Image Restoration (ECCV 2022) reveals that nonlinear activation functions (e.g. ReLU, GELU, Sigmoid) are not necessary to achieve SOTA performance. The paper provides a simple baseline for image restoration tasks, NAFNet (Nonlinear Activation Free Network), which achieves SOTA performance on image denoising and image deblurring. [Code]

License

This project is under the MIT license, and it is based on BasicSR which is under the Apache 2.0 license.

Citations

If TLC helps your research or work, please consider citing TLC.

```bibtex
@article{chu2021tlc,
  title={Improving Image Restoration by Revisiting Global Information Aggregation},
  author={Chu, Xiaojie and Chen, Liangyu and Chen, Chengpeng and Lu, Xin},
  journal={arXiv preprint arXiv:2112.04491},
  year={2021}
}
```

Contact

If you have any questions, please contact chuxiaojie@megvii.com or chenliangyu@megvii.com.
