
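MindSpore Vision on-device image classification demo (Android)

This sample shows how to run on-device inference with the MindSpore Lite C++ API (through Android JNI) and a MindSpore Lite image classification model. It classifies the content captured by the device camera and shows the most likely classification result in the app's image preview screen.

Runtime dependencies

- Android Studio >= 3.2 (version 4.0 or later recommended)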

构建与运行

-

在Android Studio中加载本示例源码。

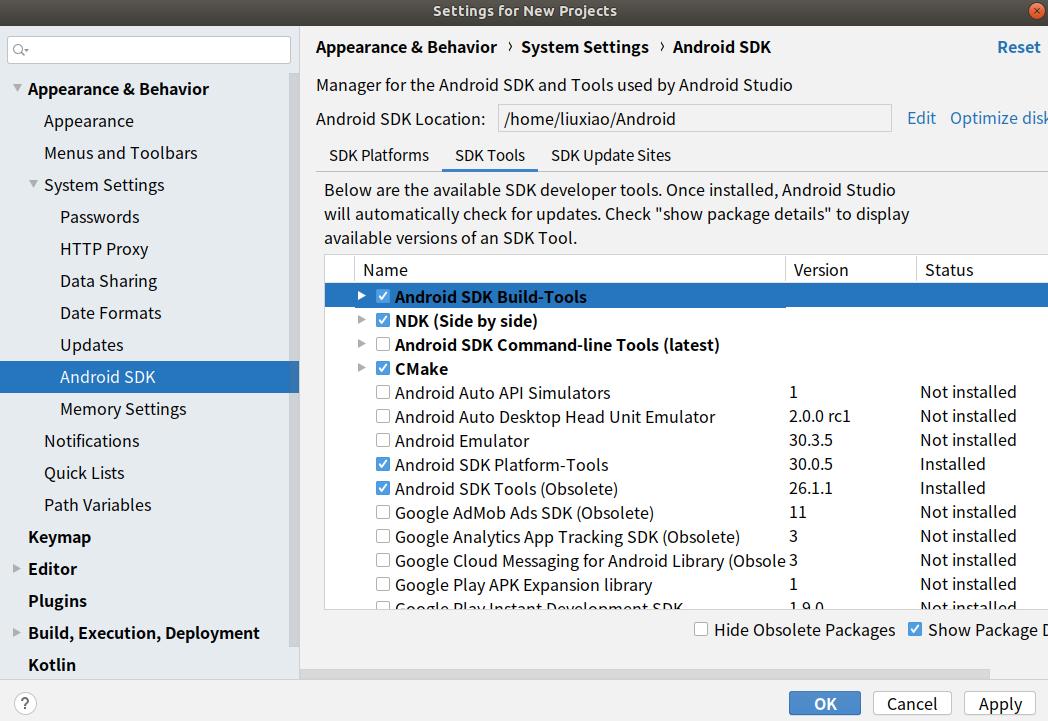

启动Android Studio后,点击

File->Settings->System Settings->Android SDK,勾选相应的SDK Tools。如下图所示,勾选后,点击OK,Android Studio即可自动安装SDK。Android SDK Tools为默认安装项,取消

Hide Obsolete Packages选框之后可看到。使用过程中若出现问题,可参考第4项解决。

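- Connect an Android device and run the app.

  Connect the Android phone over USB. Once the device is recognized, click Run 'app' to run the sample project on your phone. During the build, Android Studio automatically downloads MindSpore Lite, the model file, and other dependencies, so the build can take some time.

  For how to connect a device for debugging in Android Studio, see https://developer.android.com/studio/run/device?hl=zh-cn.

  The phone must have USB debugging enabled before Android Studio can recognize it. On Huawei phones, USB debugging is usually turned on under Settings->System & updates->Developer options->USB debugging.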

-

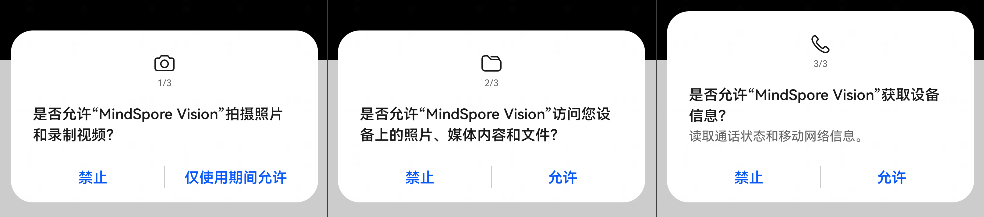

在Android设备上,点击“允许”获取权限和设备信息,点击完成后即可使用APP。

打开APP后,在首页点击分类模块后,即可点击中间按钮进行拍照获取图片,或者点击上侧栏的图像按钮选择进行图片相册用于图像分类功能。

在默认情况下,MindSpore Vision分类模块内置了一个通用的AI网络模型对图像进行识别分类。

-

Demo部署问题解决方案。

4.1 NDK、CMake、JDK等工具问题:

如果Android Studio内安装的工具出现无法识别等问题,可重新从相应官网下载和安装,并配置路径。

4.2 NDK版本不匹配问题:

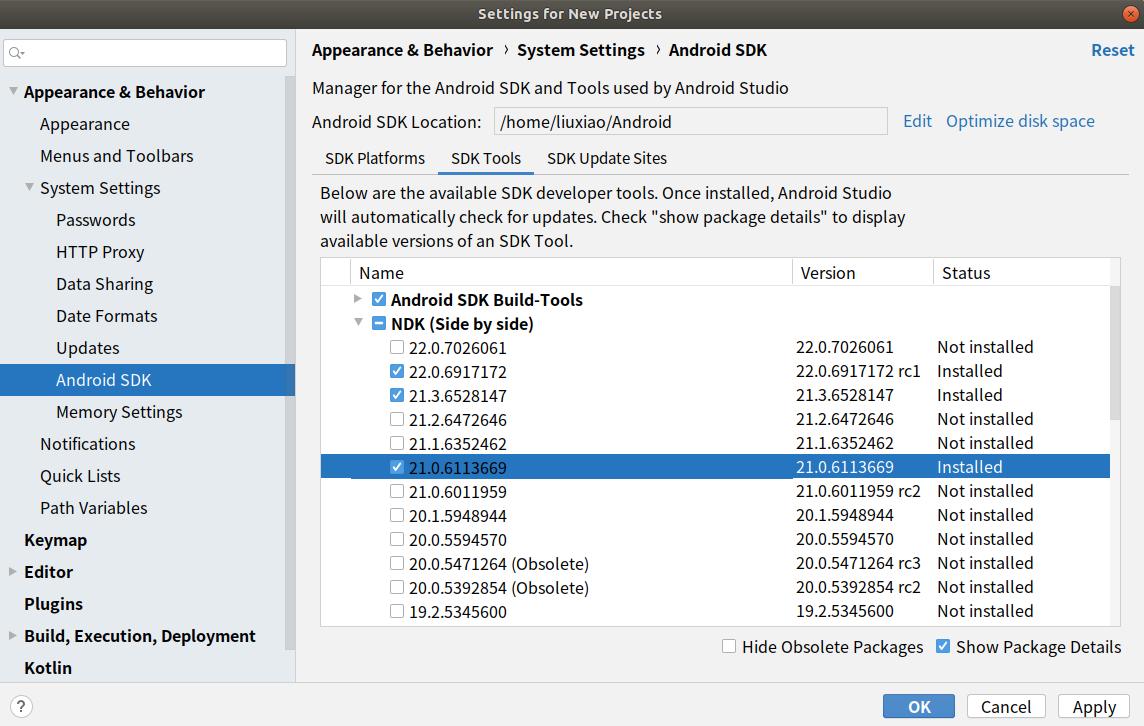

打开

Android SDK,点击Show Package Details,根据报错信息选择安装合适的NDK版本。

4.3 Android Studio版本问题:

在

工具栏-help-Checkout for Updates中更新Android Studio版本。4.4 Gradle下依赖项安装过慢问题:

如图所示, 打开Demo根目录下

build.gradle文件,加入华为镜像源地址:maven {url 'https://developer.huawei.com/repo/'},修改classpath为4.2.1,点击sync进行同步。下载完成后,将classpath版本复原,再次进行同步。

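Detailed description of the sample

This on-device image classification Android sample is split into a Java layer and a JNI layer. The Java layer obtains image frames by taking photos or reading local pictures and handles the related image processing. The JNI layer performs model inference.

This section describes the JNI layer in detail. The Java layer uses the photo and local-picture APIs to open the device camera and process image frames, so readers need some basic Android development knowledge.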

Sample structure

    app
    ├── src/main
    │   ├── assets # resource files
    │   │   └── mobilenetv2.ms # model file
    │   │
    │   ├── cpp # main classes wrapping model loading and prediction
    │   │   ├── CMakeLists.txt # CMake build entry file
    │   │   ├── mindspore_lite_x.x.x-runtime-arm64-cpu # MindSpore Lite release
    │   │   ├── CustomMindSporeNetnative.cpp # JNI methods that call MindSpore
    │   │   ├── CustomMindSporeNetnative.h # common header file
    │   │   └── MsNetWork.cpp # MindSpore API wrapper
    │   │
    │   ├── java # Java-layer application code
    │   │   └── com.mindspore.vision
    │   │       ├── base # shared common components
    │   │       │   └── ...
    │   │       ├── common # shared common components
    │   │       │   └── ...
    │   │       ├── train # image processing and MindSpore JNI calls
    │   │       │   └── ...
    │   │       ├── ui # camera startup and user interaction
    │   │       │   └── ...
    │   │       └── ...
    │   ├── res # Android resource files
    │   └── AndroidManifest.xml # Android configuration file
    │
    ├── build.gradle # other Android configuration
    ├── download.gradle # downloads the files the project depends on
    └── ...

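Configuring MindSpore Lite dependencies

When the Android JNI layer calls the MindSpore C++ API, it needs the supporting library files. You can build MindSpore Lite from source to generate the mindspore-lite-{version}-minddata-{os}-{device}.tar.gz package and extract it (it contains the libmindspore-lite.so library and the related header files). This sample requires the build command that produces the package with the image preprocessing module.

version: version of the output package, matching the version of the branch being built.

device: currently either cpu (built-in CPU operators) or gpu (built-in CPU and GPU operators).

os: operating system on which the output package is to be deployed.

In this sample, the build process uses download.gradle to download the MindSpore Lite release package automatically and place it in the app/src/main/cpp/ directory.

If the automatic download fails, download the library package manually, extract it, and put it in that location:

MindSpore Lite release package: download link

In the app's build.gradle file, configure CMake build support and arm64-v8a support as follows: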

    android {
        compileSdkVersion 30
        buildToolsVersion '30.0.3'

        defaultConfig {
            applicationId "com.mindspore.vision"
            minSdkVersion 21
            targetSdkVersion 30
            versionCode 1
            versionName "1.0"
            testInstrumentationRunner "androidx.test.runner.AndroidJUnitRunner"

            externalNativeBuild {
                cmake {
                    arguments "-DANDROID_STL=c++_shared"
                    cppFlags "-std=c++17"
                }
            }
            ndk {
                abiFilters 'arm64-v8a'
            }
        }
    }

In the app/CMakeLists.txt file, link the .so library files as follows.

    # ============== Set MindSpore Dependencies. =============
    include_directories(${CMAKE_SOURCE_DIR})
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION})
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/third_party)
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/include)
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/include/dataset)
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/include/dataset/lite_cv)
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime)
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/include/ir/dtype)
    include_directories(${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/include/schema)

    add_library(mindspore-lite SHARED IMPORTED)
    add_library(minddata-lite SHARED IMPORTED)
    #add_library(mindspore-lite-train SHARED IMPORTED)
    add_library(libjpeg SHARED IMPORTED)
    add_library(libturbojpeg SHARED IMPORTED)
    #add_library(securec STATIC IMPORTED)

    set_target_properties(mindspore-lite PROPERTIES IMPORTED_LOCATION
            ${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/lib/libmindspore-lite.so)
    set_target_properties(minddata-lite PROPERTIES IMPORTED_LOCATION
            ${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/lib/libminddata-lite.so)
    #set_target_properties(mindspore-lite-train PROPERTIES IMPORTED_LOCATION
    #        ${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/lib/libmindspore-lite-train.so)
    set_target_properties(libjpeg PROPERTIES IMPORTED_LOCATION
            ${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/third_party/libjpeg-turbo/lib/libjpeg.so)
    set_target_properties(libturbojpeg PROPERTIES IMPORTED_LOCATION
            ${CMAKE_SOURCE_DIR}/${MINDSPORELITE_VERSION}/runtime/third_party/libjpeg-turbo/lib/libturbojpeg.so)
    # --------------- MindSpore Lite set End. --------------------

    # build script, prebuilt third-party libraries, or system libraries.
    add_definitions(-DMNN_USE_LOGCAT)

    target_link_libraries( # Specifies the target library.
            mlkit-label-MS
            mindspore-lite
            minddata-lite
            # mindspore-lite-train
            libjpeg
            libturbojpeg

            # --- other dependencies.---
            -ljnigraphics
            android

            # Links the target library to the log library
            ${log-lib}
            )

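Downloading and deploying the model file

Download the model file from MindSpore Model Hub. The on-device image classification model used in this sample is mobilenetv2.ms. It is likewise downloaded automatically by the download.gradle script when the app is built, and placed in the app/src/main/assets project directory.

If the download fails, download the model file manually: mobilenetv2.ms download link.

Writing the on-device inference code

The JNI layer calls the MindSpore Lite C++ API to perform on-device inference.

The inference flow is as follows. For the complete code, see src/cpp/MindSporeNetnative.cpp.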

- Load the MindSpore Lite model file and build the context, session, and computational graph used for inference.

  - Load the model file: create and configure the context for model inference.

        // Buffer is the model data passed in by the Java layer
        jlong bufferLen = env->GetDirectBufferCapacity(buffer);
        char *modelBuffer = CreateLocalModelBuffer(env, buffer);

  - Create a session.

        void **labelEnv = new void *;
        MSNetWork *labelNet = new MSNetWork;
        *labelEnv = labelNet;

        // Create context.
        lite::Context *context = new lite::Context;
        context->thread_num_ = numThread;  // Specify the number of threads to run inference

        // Create the mindspore session.
        labelNet->CreateSessionMS(modelBuffer, bufferLen, context);
        delete(context);

  - Load the model file and build the computational graph for inference.

        void MSNetWork::CreateSessionMS(char* modelBuffer, size_t bufferLen, std::string name, mindspore::lite::Context* ctx)
        {
            CreateSession(modelBuffer, bufferLen, ctx);
            session = mindspore::session::LiteSession::CreateSession(ctx);
            auto model = mindspore::lite::Model::Import(modelBuffer, bufferLen);
            int ret = session->CompileGraph(model);
        }

- Convert the input image into the Tensor format expected by the MindSpore model.

  Convert the image data to be classified into the Tensor that is fed to the MindSpore model.

      if (!BitmapToLiteMat(env, srcBitmap, &lite_mat_bgr)) {
        MS_PRINT("BitmapToLiteMat error");
        return NULL;
      }
      if (!PreProcessImageData(lite_mat_bgr, &lite_norm_mat_cut)) {
        MS_PRINT("PreProcessImageData error");
        return NULL;
      }

      ImgDims inputDims;
      inputDims.channel = lite_norm_mat_cut.channel_;
      inputDims.width = lite_norm_mat_cut.width_;
      inputDims.height = lite_norm_mat_cut.height_;

      // Get the MindSpore inference environment that was created in loadModel().
      void **labelEnv = reinterpret_cast<void **>(netEnv);
      if (labelEnv == nullptr) {
        MS_PRINT("MindSpore error, labelEnv is a nullptr.");
        return NULL;
      }
      MSNetWork *labelNet = static_cast<MSNetWork *>(*labelEnv);

      auto mSession = labelNet->session();
      if (mSession == nullptr) {
        MS_PRINT("MindSpore error, Session is a nullptr.");
        return NULL;
      }
      MS_PRINT("MindSpore get session.");

      auto msInputs = mSession->GetInputs();
      if (msInputs.size() == 0) {
        MS_PRINT("MindSpore error, msInputs.size() equals 0.");
        return NULL;
      }
      auto inTensor = msInputs.front();

      float *dataHWC = reinterpret_cast<float *>(lite_norm_mat_cut.data_ptr_);
      // Copy dataHWC to the model input tensor.
      memcpy(inTensor->MutableData(), dataHWC,
             inputDims.channel * inputDims.width * inputDims.height * sizeof(float));

- Run inference on the input Tensor with the model, obtain the output Tensor, and post-process it.

  - Execute the graph to run on-device inference.

        // After the model and image tensor data is loaded, run inference.
        auto status = mSession->RunGraph();

  - Get the output data.

        auto names = mSession->GetOutputTensorNames();
        std::unordered_map<std::string, mindspore::tensor::MSTensor *> msOutputs;
        for (const auto &name : names) {
          auto temp_dat = mSession->GetOutputByTensorName(name);
          msOutputs.insert(std::pair<std::string, mindspore::tensor::MSTensor *> {name, temp_dat});
        }
        std::string resultStr = ProcessRunnetResult(::RET_CATEGORY_SUM,
                                                    ::labels_name_map, msOutputs);

  - Post-process the output data.

        std::string ProcessRunnetResult(const int RET_CATEGORY_SUM, const char *const labels_name_map[],
                                        std::unordered_map<std::string, mindspore::tensor::MSTensor *> msOutputs) {
          // Get the branch of the model output.
          // Use iterators to get map elements.
          std::unordered_map<std::string, mindspore::tensor::MSTensor *>::iterator iter;
          iter = msOutputs.begin();

          // The mobilenetv2.ms model output just one branch.
          auto outputTensor = iter->second;

          int tensorNum = outputTensor->ElementsNum();
          MS_PRINT("Number of tensor elements:%d", tensorNum);

          // Get a pointer to the first score.
          float *temp_scores = static_cast<float *>(outputTensor->MutableData());
          float scores[RET_CATEGORY_SUM];
          for (int i = 0; i < RET_CATEGORY_SUM; ++i) {
            scores[i] = temp_scores[i];
          }

          float unifiedThre = 0.5;
          float probMax = 1.0;
          for (size_t i = 0; i < RET_CATEGORY_SUM; ++i) {
            float threshold = g_thres_map[i];
            float tmpProb = scores[i];
            if (tmpProb < threshold) {
              tmpProb = tmpProb / threshold * unifiedThre;
            } else {
              tmpProb = (tmpProb - threshold) / (probMax - threshold) * unifiedThre + unifiedThre;
            }
            scores[i] = tmpProb;
          }

          for (int i = 0; i < RET_CATEGORY_SUM; ++i) {
            if (scores[i] > 0.5) {
              MS_PRINT("MindSpore scores[%d] : [%f]", i, scores[i]);
            }
          }

          // Score for each category.
          // Converted to text information that needs to be displayed in the APP.
          std::string categoryScore = "";
          for (int i = 0; i < RET_CATEGORY_SUM; ++i) {
            categoryScore += labels_name_map[i];
            categoryScore += ":";
            std::string score_str = std::to_string(scores[i]);
            categoryScore += score_str;
            categoryScore += ";";
          }
          return categoryScore;
        }
