Are you sure you want to delete this task? Once this task is deleted, it cannot be recovered.

|

|

1 year ago | |

|---|---|---|

| .. | ||

| README.md | 1 year ago | |

| README_CN.md | 1 year ago | |

| visformer.png | 1 year ago | |

| visformer_smallV2_ascend.yaml | 1 year ago | |

| visformer_small_ascend.yaml | 1 year ago | |

| visformer_tinyV2_ascend.yaml | 1 year ago | |

| visformer_tiny_ascend.yaml | 1 year ago | |

README.md

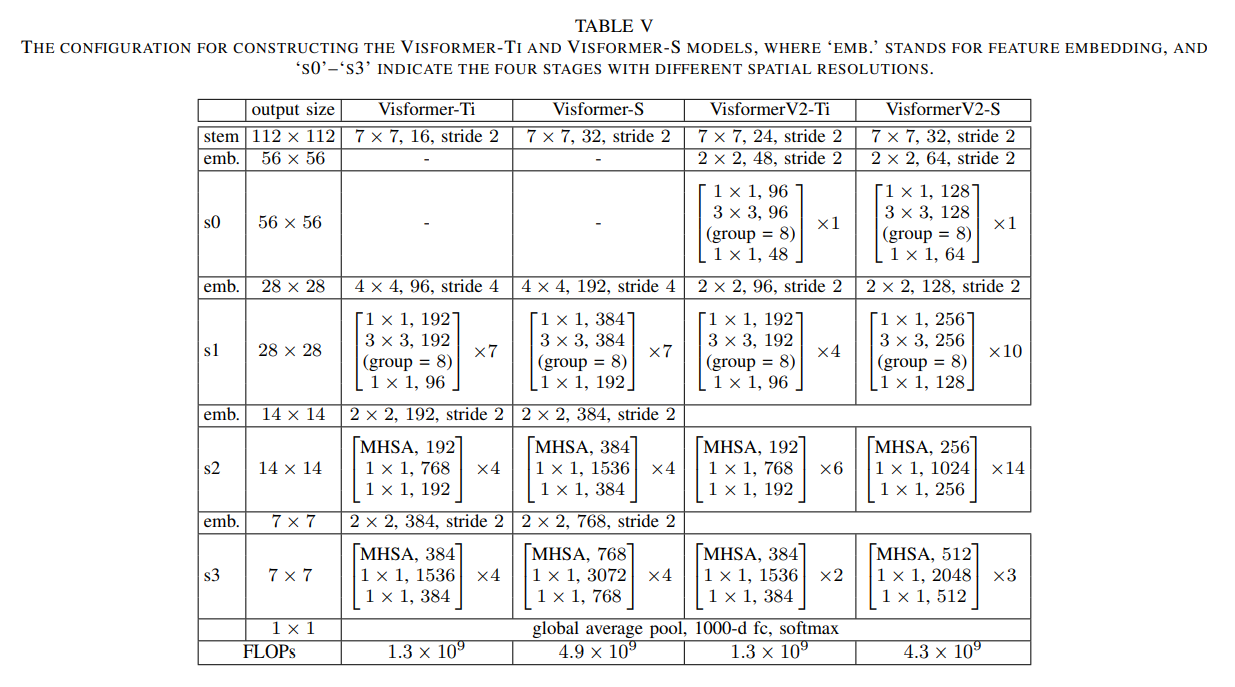

Visformer

Introduction

The past few years have witnessed the rapid development of applying the Transformer module to vision problems. While some

researchers have demonstrated that Transformer based models enjoy a favorable ability of fitting data, there are still

growing number of evidences showing that these models suffer over-fitting especially when the training data is limited.

This paper offers an empirical study by performing step-bystep operations to gradually transit a Transformer-based model

to a convolution-based model. The results we obtain during the transition process deliver useful messages for improving

visual recognition. Based on these observations, we propose a new architecture named Visformer, which is abbreviated from

the ‘Vision-friendly Transformer’.

Results

| Model | Context | Top-1 (%) | Top-5 (%) | Params (M) | Train T. | Infer T. | Download | Config | Log |

|---|---|---|---|---|---|---|---|---|---|

| visformer_tiny | D910x8-G | 78.61 | 94.33 | 10 | 353s/epoch | 10.9ms/step | model | cfg | log |

| visformer_tiny2 | D910x8-G | 78.62 | 94.36 | 9 | 390s/epoch | 11.5ms/step | model | cfg | log |

| visformer_small | D910x8-G | 81.77 | 95.72 | 40 | 440s/epoch | 15.3ms/step | model | cfg | log |

| visformer_small2 | D910x8-G | 82.17 | 95.90 | 23 | 450s/epoch | 19.2ms/step | model | cfg | log |

Notes

- All models are trained on ImageNet-1K training set and the top-1 accuracy is reported on the validatoin set.

- Context: GPU_TYPE x pieces - G/F, G - graph mode, F - pynative mode with ms function.

Quick Start

Preparation

Installation

Please refer to the installation instruction in MindCV.

Dataset Preparation

Please download the ImageNet-1K dataset for model training and validation.

Training

-

Hyper-parameters. The hyper-parameter configurations for producing the reported results are stored in the yaml

files inmindcv/configs/visformerfolder. For example, to train with one of these configurations, you can run:# train densenet121 on 8 GPUs mpirun -n 8 python train.py --config configs/visformer/visformer_tiny_ascend.yaml --data_dir /path/to/imagenetNote that the number of GPUs/Ascends and batch size will influence the training results. To reproduce the training result at most, it is recommended to use the same number of GPUs/Ascends with the same batch size.

Detailed adjustable parameters and their default value can be seen in config.py.

Validation

-

To validate the model, you can use

validate.py. Here is an example for visformer_tiny to verify the accuracy of your

training.python validate.py --config configs/visformer/visformer_tiny_ascend.yaml --data_dir /path/to/imagenet --ckpt_path /path/to/visformer_tiny.ckpt

Deployment (optional)

Please refer to the deployment tutorial in MindCV.

No Description

Jupyter Notebook Python Markdown Text