TextGAN-PyTorch

TextGAN is a PyTorch framework for text generation models based on Generative Adversarial Networks (GANs), including general text generation models and category text generation models. It serves as a benchmarking platform to support research on GAN-based text generation. Since most GAN-based text generation models are implemented in TensorFlow, TextGAN can help those accustomed to PyTorch enter the text generation field faster.

If you find any mistake in my implementation, please let me know! Also, please feel free to contribute to this repository if you want to add other models.

Requirements

To install, run pip install -r requirements.txt. In case of CUDA problems, consult the official PyTorch Get Started guide.

KenLM Installation

Implemented Models and Original Papers

General Text Generation

Category Text Generation

Get Started

git clone https://github.com/williamSYSU/TextGAN-PyTorch.git

cd TextGAN-PyTorch

- For real data experiments, all datasets (Image COCO, EMNLP News, Movie Review, Amazon Review) can be downloaded from here.

- Run with a specific model

cd run

python3 run_[model_name].py 0 0 # The first 0 is job_id, the second 0 is gpu_id

# For example

python3 run_seqgan.py 0 0

Features

- Instructor

For each model, the entire running process is defined in its instructor, for example instructor/oracle_data/seqgan_instructor.py for SeqGAN in the synthetic data experiment. Some basic functions like init_model() and optimize() are defined in the base class BasicInstructor in instructor.py. If you want to add a new GAN-based text generation model, please create a new instructor under instructor/oracle_data and define the training process for the model.

- Visualization

Use utils/visualization.py to visualize the log file, including model loss and metric scores. List your log files in log_file_list; the list may contain no more than len(color_list) entries. Log filenames should be given without the .txt extension.

- Logging

TextGAN-PyTorch uses Python's logging module to record the running process, such as the generator's loss and metric scores. For convenient visualization, two identical log files are saved, in log/log_****_****.txt and save/**/log.txt. The code also automatically saves the models' state dicts and a batch of generator samples in ./save/**/models and ./save/**/samples at each log step, where ** depends on your hyper-parameters.
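As an illustration of how one logger can produce two identical log files, here is a minimal sketch built on the standard logging module. The function name create_logger and the file paths passed to it are hypothetical and chosen for this example; the repository's own logging setup may be organized differently.

```python
import logging
import os


def create_logger(name, log_paths):
    """Return a logger that writes every record to each path in log_paths.

    Hypothetical helper for illustration, not the repository's own function.
    """
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep records out of the root logger
    formatter = logging.Formatter("%(message)s")
    for path in log_paths:
        # Create the parent directory if it does not exist yet.
        os.makedirs(os.path.dirname(path) or ".", exist_ok=True)
        handler = logging.FileHandler(path, mode="w")
        handler.setFormatter(formatter)
        logger.addHandler(handler)
    return logger
```

Attaching one FileHandler per destination means a single logger.info(...) call lands in both files, so the copies cannot drift apart.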

- Running Signal

You can easily control the training process with the class Signal (see utils/helpers.py), which reads the dictionary file run_signal.txt.

To use Signal, edit the local file run_signal.txt. For example, if you set pre_sig to False, the program stops the pre-training process and moves on to the next training phase. This is a convenient way to stop training early once you think the current phase has trained enough.
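The idea can be sketched as follows. This is a simplified stand-in and not the code in utils/helpers.py: the adv_sig key, the pretrain() helper and its on_epoch callback are assumptions made for this example, and the file is assumed to contain a Python dictionary literal such as {'pre_sig': True, 'adv_sig': True}.

```python
import ast


class Signal:
    """Poll a small dictionary file so a running job can be steered by hand."""

    def __init__(self, signal_file):
        self.signal_file = signal_file
        self.pre_sig = True  # keep pre-training while True
        self.adv_sig = True  # assumed flag for the adversarial phase
        self.update()

    def update(self):
        # Re-read the file; keys missing from it leave the current value as is.
        with open(self.signal_file, "r") as fin:
            flags = ast.literal_eval(fin.read())
        self.pre_sig = bool(flags.get("pre_sig", self.pre_sig))
        self.adv_sig = bool(flags.get("adv_sig", self.adv_sig))


def pretrain(signal, max_epochs, on_epoch=None):
    """Run up to max_epochs, stopping early once pre_sig is False.

    Returns the number of epochs that actually ran.
    """
    done = 0
    for epoch in range(max_epochs):
        signal.update()  # pick up manual edits made since the last epoch
        if not signal.pre_sig:
            break
        if on_epoch is not None:
            on_epoch(epoch)  # placeholder for one real pre-training epoch
        done += 1
    return done
```

Because the file is re-read at the start of every epoch, an edit made while the job is running takes effect at the next epoch boundary. The sketch parses the file with ast.literal_eval, which only accepts literals and so cannot execute arbitrary code from the signal file.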

- Automatically select GPU

In config.py, the program automatically selects the GPU device with the lowest GPU-Util reported by nvidia-smi. This feature is enabled by default. If you want to select a GPU device manually, uncomment the --device argument in run_[model_name].py and specify the GPU device on the command line.
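One way to implement this kind of selection is sketched below. It is not the code in config.py: the function names are hypothetical, and the sketch assumes nvidia-smi is on the PATH and queried with its --query-gpu=utilization.gpu option in CSV format.

```python
import subprocess


def parse_utilization(csv_text):
    """Turn one-number-per-line nvidia-smi output into a list of ints."""
    return [int(line.strip()) for line in csv_text.splitlines() if line.strip()]


def least_utilized_gpu(utilization):
    """Return the index of the GPU with the lowest utilization.

    Ties are resolved in favor of the lowest index.
    """
    if not utilization:
        raise ValueError("no GPUs reported")
    return min(range(len(utilization)), key=utilization.__getitem__)


def query_gpu_utilization():
    """Ask nvidia-smi for per-GPU utilization in percent.

    Requires an NVIDIA driver; raises if nvidia-smi is missing or fails.
    """
    out = subprocess.check_output(
        ["nvidia-smi", "--query-gpu=utilization.gpu", "--format=csv,noheader,nounits"],
        text=True,
    )
    return parse_utilization(out)
```

On a machine with NVIDIA GPUs, least_utilized_gpu(query_gpu_utilization()) gives the device index to use. Keeping the parsing and the selection separate from the subprocess call lets both be checked on a machine without a GPU.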

Implementation Details

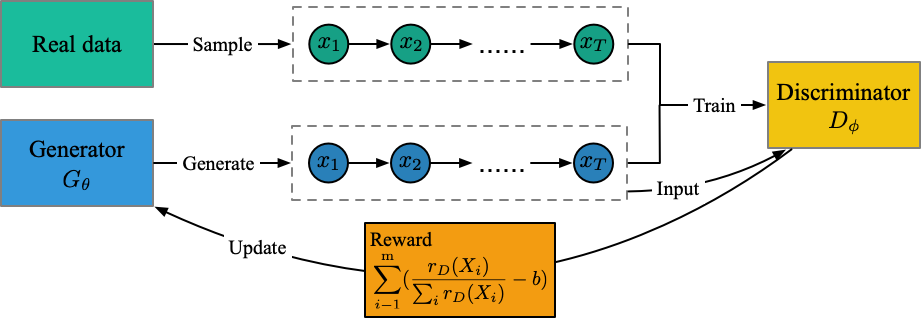

SeqGAN

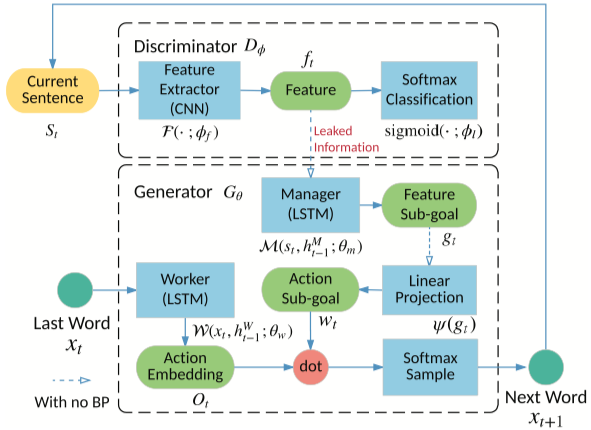

LeakGAN

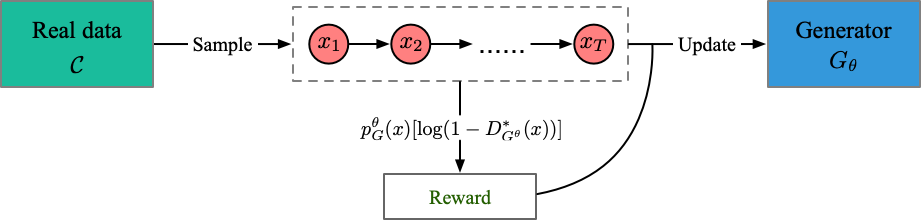

MaliGAN

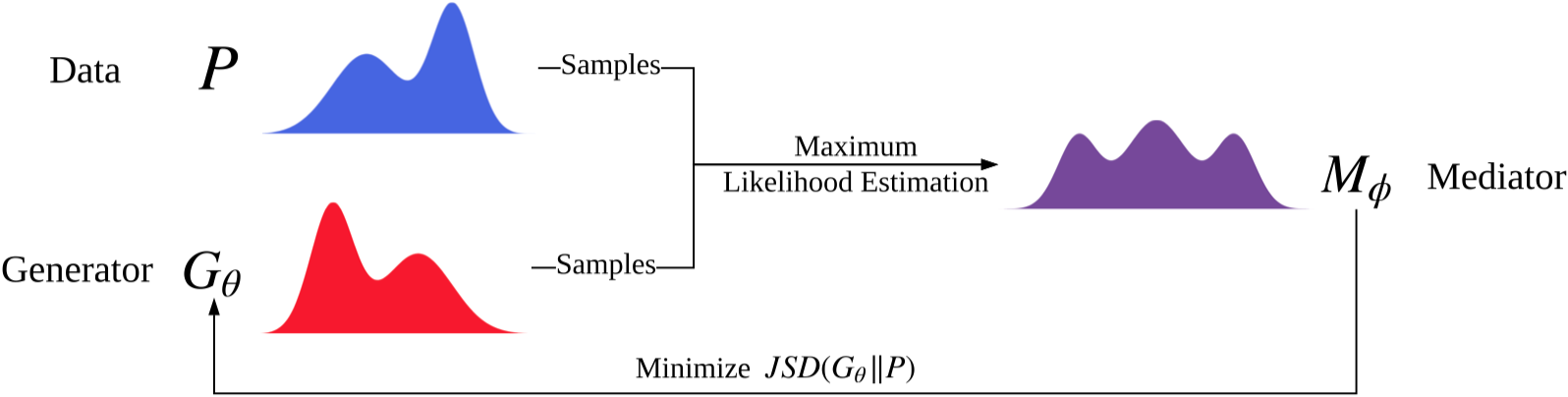

JSDGAN

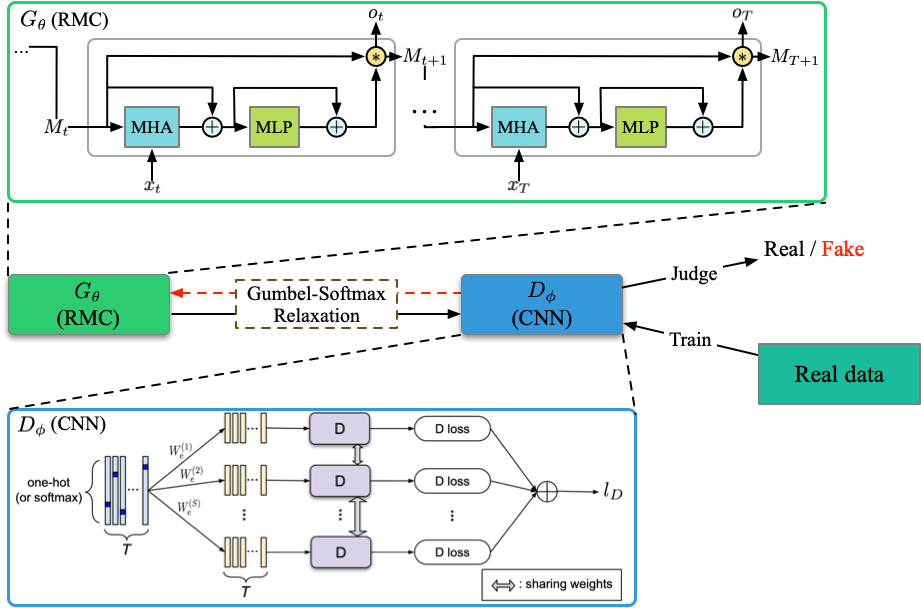

RelGAN

DPGAN

DGSAN

CoT

SentiGAN

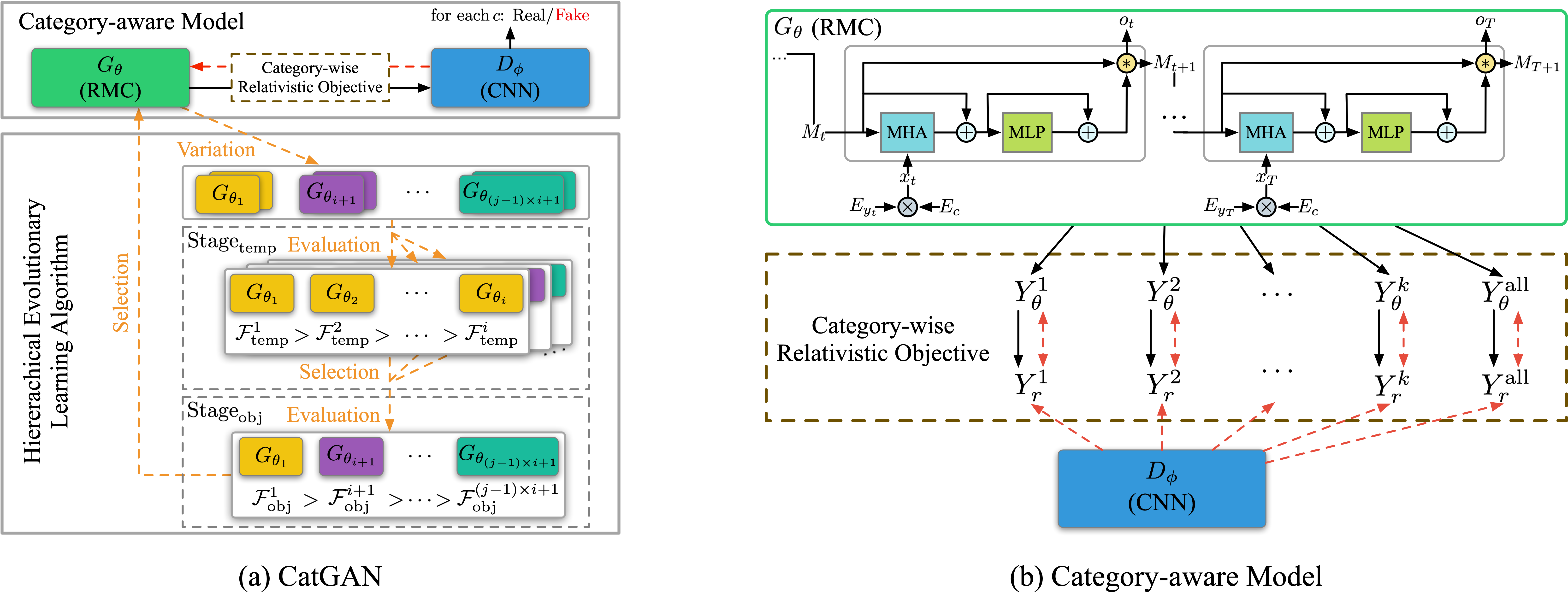

CatGAN

Licence

MIT license