Are you sure you want to delete this task? Once this task is deleted, it cannot be recovered.

|

|

3 years ago | |

|---|---|---|

| .. | ||

| .benchmark | 5 years ago | |

| README.md | 3 years ago | |

| actor.py | 4 years ago | |

| atari_agent.py | 3 years ago | |

| atari_model.py | 4 years ago | |

| ga3c_config.py | 4 years ago | |

| train.py | 3 years ago | |

README.md

Reproduce GA3C with PARL

Based on PARL, the GA3C algorithm of deep reinforcement learning has been reproduced, reaching the same level of indicators as the paper in Atari benchmarks.

Original paper: GA3C: GPU-based A3C for Deep Reinforcement Learning

A hybrid CPU/GPU version of the Asynchronous Advantage Actor-Critic (A3C) algorithm.

Atari games introduction

Please see here to know more about Atari games.

Benchmark result

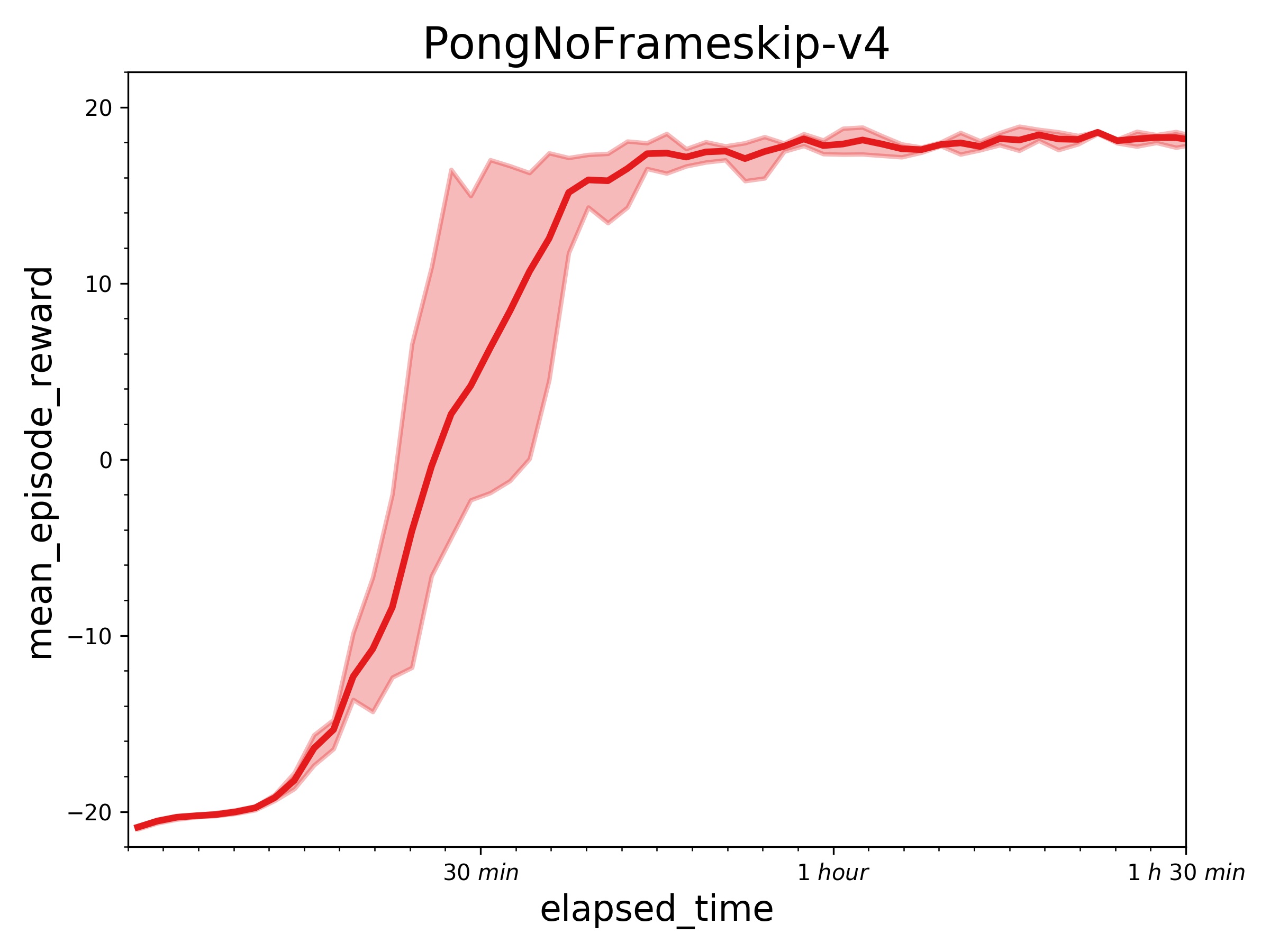

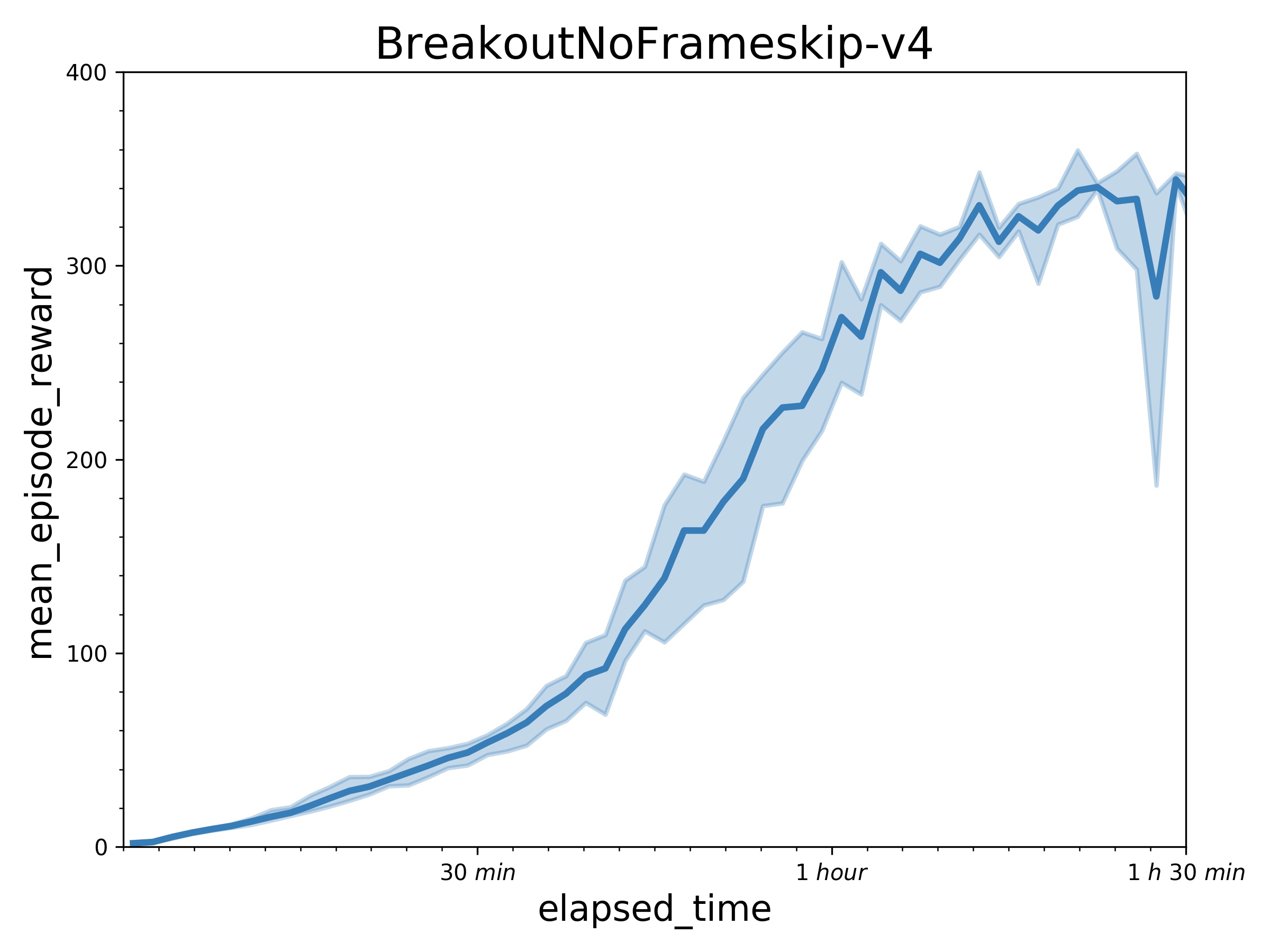

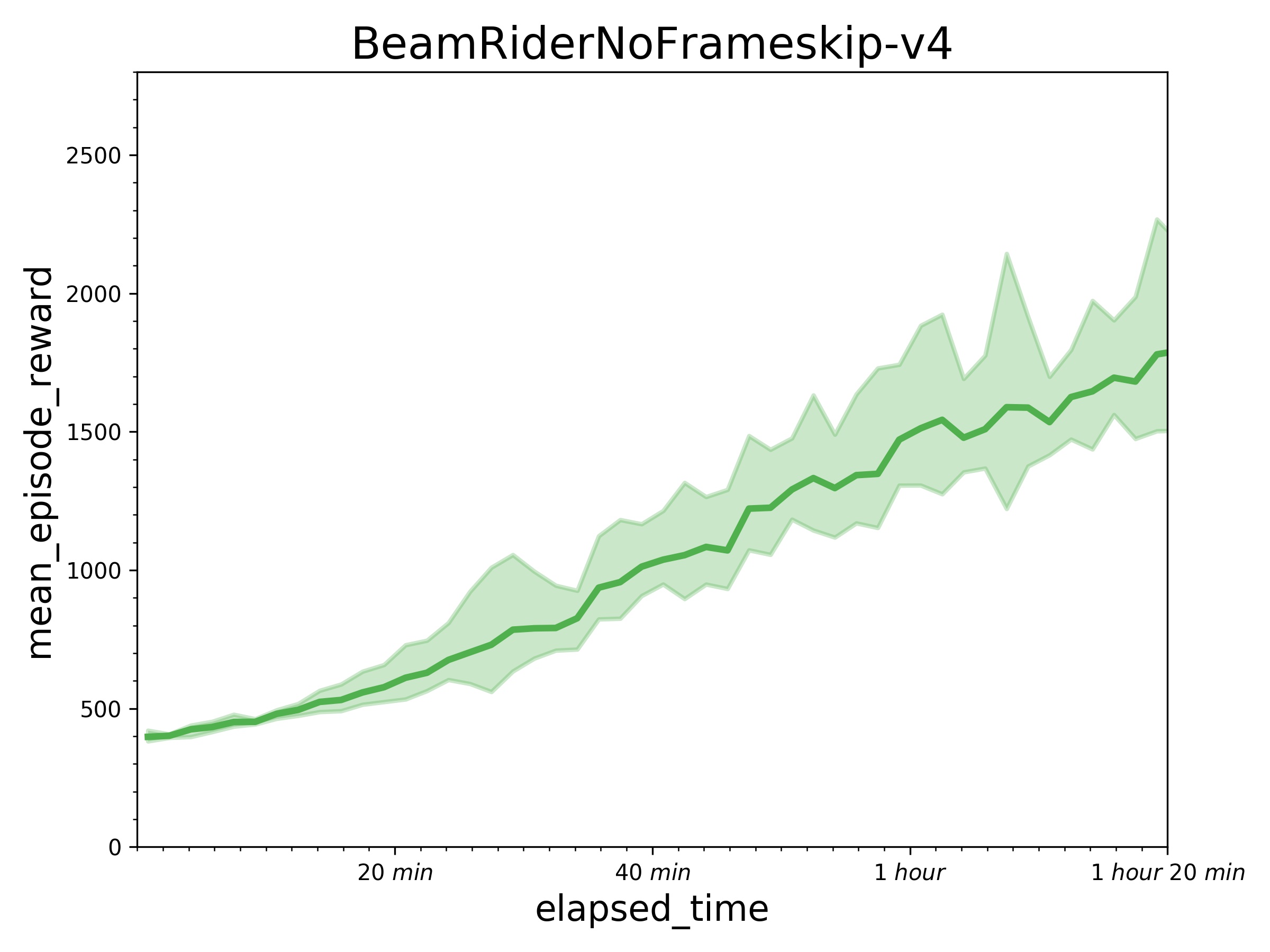

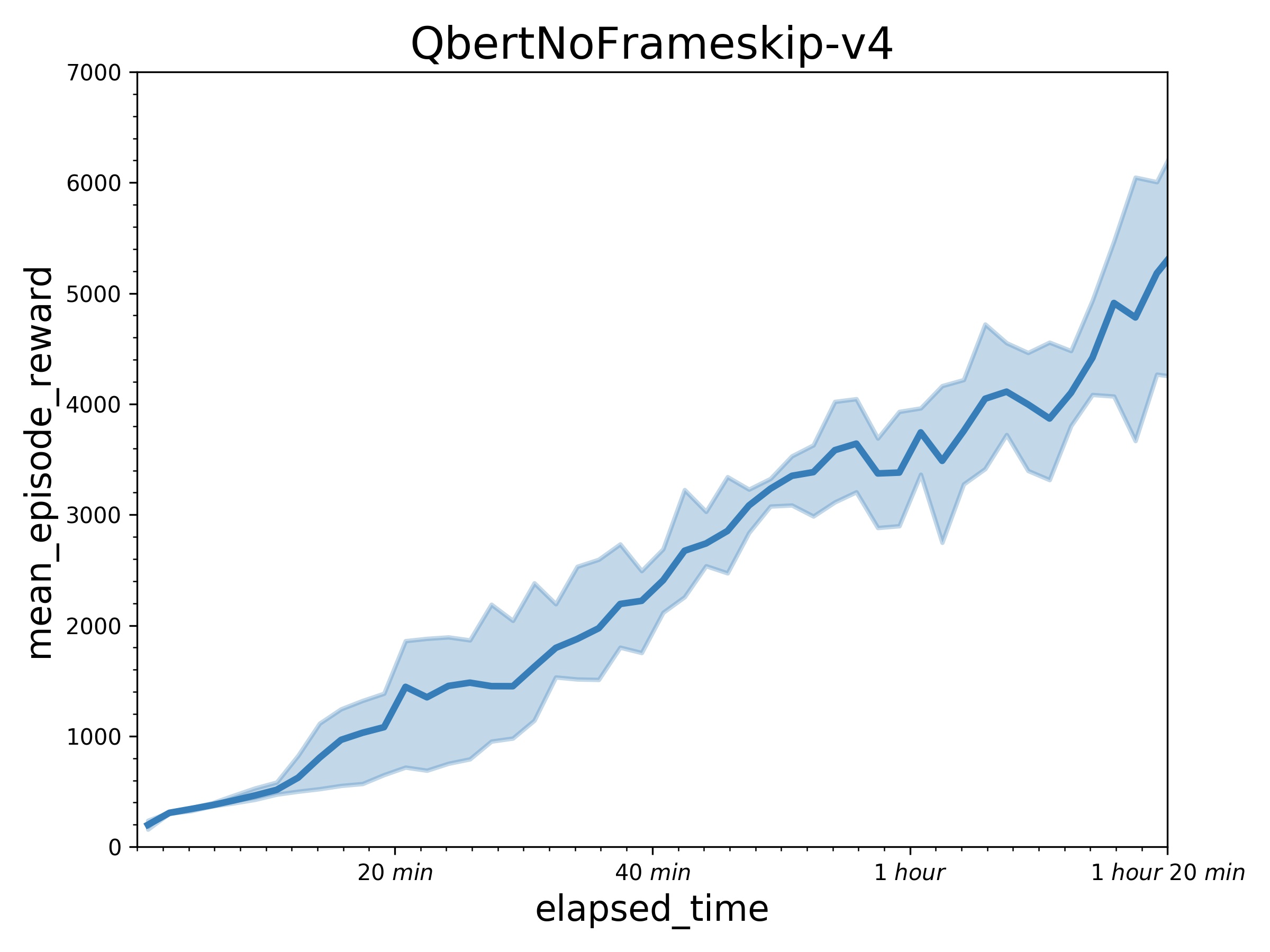

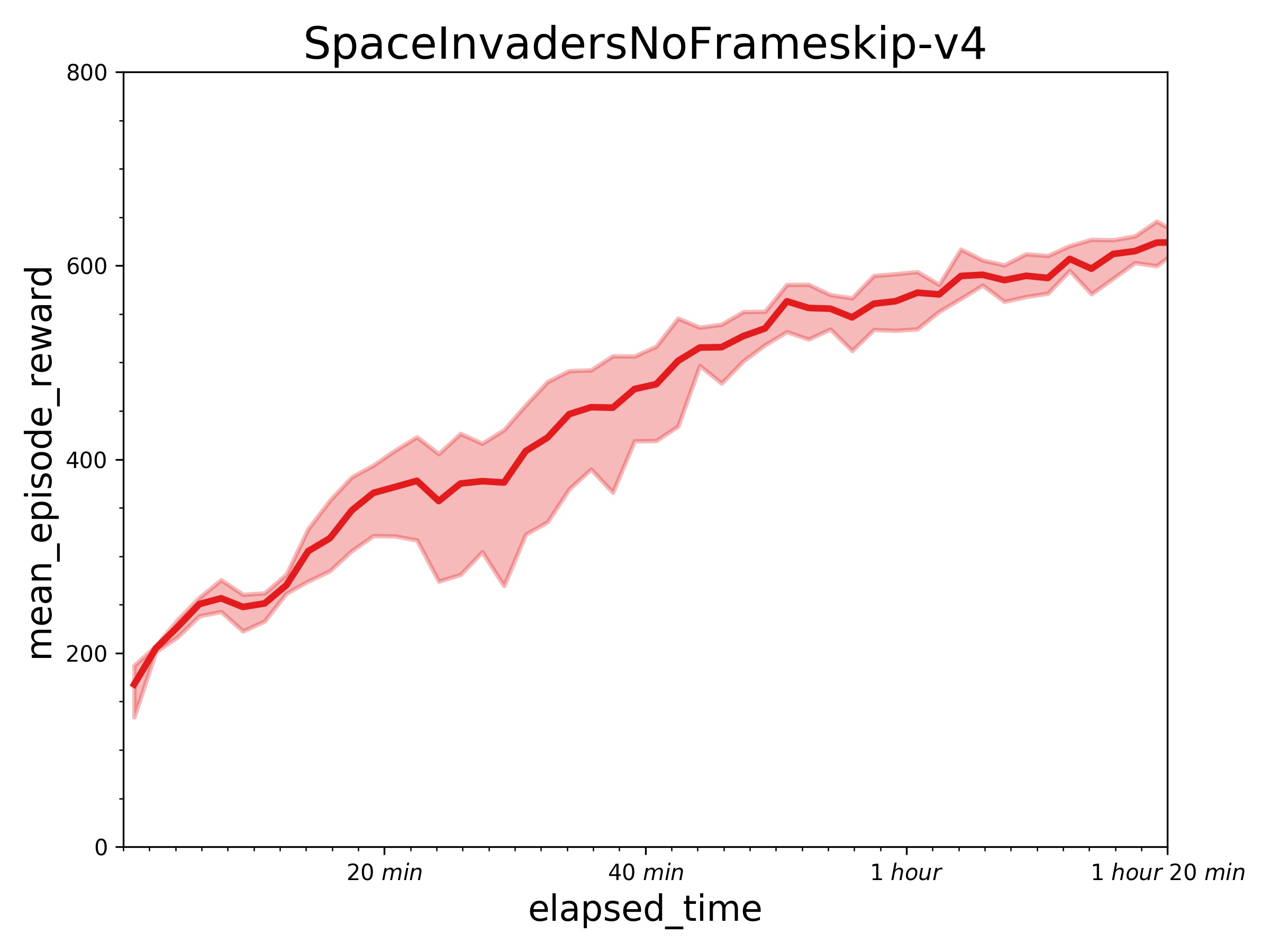

Results with one learner (in a P40 GPU) and 24 simulators (in 12 CPU) in 10 million sample steps.

How to use

Dependencies

- paddlepaddle>=1.8.5

- parl

- gym==0.12.1

- atari-py==0.1.7

Distributed Training

At first, We can start a local cluster with 24 CPUs:

xparl start --port 8010 --cpu_num 24

Note that if you have started a master before, you don't have to run the above

command. For more information about the cluster, please refer to our

documentation

Then we can start the distributed training by running:

python train.py

[Tips] The performance can be influenced dramatically in a slower computational

environment, especially when training with low-speed CPUs. It may be caused by

the policy-lag problem.

Reference

PARL 是一个高性能、灵活的强化学习框架

Python C++ JavaScript Markdown Shell other