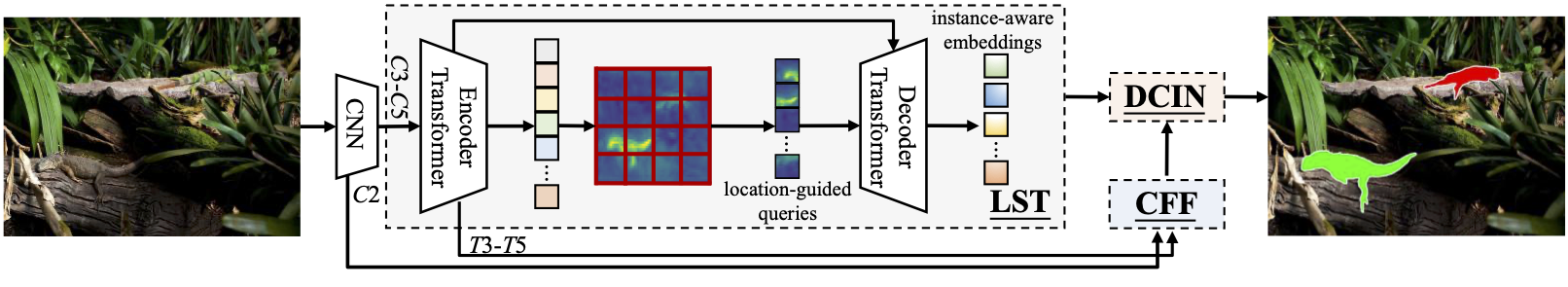

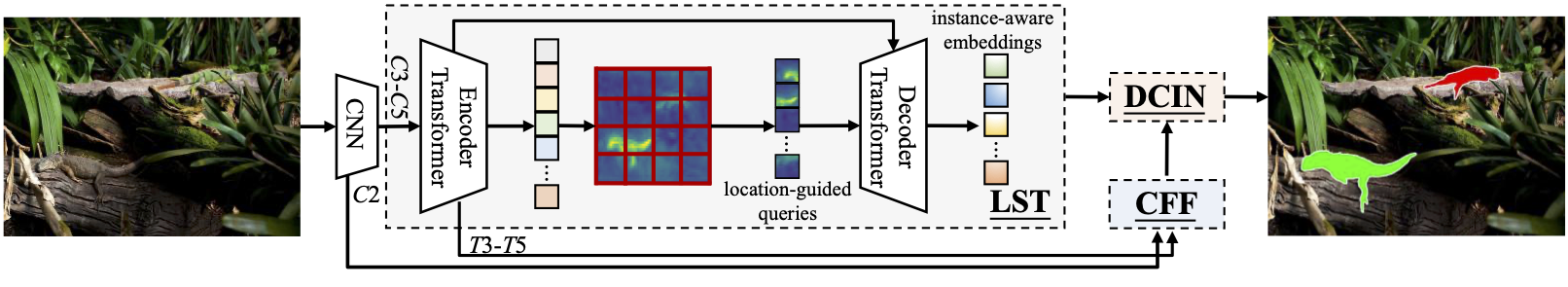

OSFormer: One-Stage Camouflaged Instance Segmentation with Transformers (ECCV 2022)

SINet-V3 Official Implementation of "OSFormer: One-Stage Camouflaged Instance Segmentation with Transformers"

Jialun Pei*, Tianyang Cheng*, Deng-Ping Fan, He Tang, Chuanbo Chen, and Luc Van Gool

[Paper]; [Chinese Version]; [Official Version]; [Project Page]

Contact: dengpfan@gmail.com, peijl@hust.edu.cn

| Sample 1 | Sample 2 | Sample 3 | Sample 4 |
| --- | --- | --- | --- |

Environment preparation

The code is tested with CUDA 11.1 and PyTorch 1.9.0; change the versions below to match your setup.

git clone https://github.com/PJLallen/OSFormer.git

cd OSFormer

conda create -n osformer python=3.8 -y

conda activate osformer

conda install pytorch==1.9.0 torchvision cudatoolkit=11.1 -c pytorch -c nvidia -y

python -m pip install detectron2 -f https://dl.fbaipublicfiles.com/detectron2/wheels/cu111/torch1.9/index.html

python setup.py build develop
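After installation, a quick check of the installed package versions can catch mismatches early. The following is a minimal sketch using only the standard library; the helper names (`installed_version`, `check_environment`, `matches_tested`) are hypothetical and not part of this repository, and it assumes the packages are registered under the distribution names `torch`, `torchvision`, and `detectron2`.

```python
from importlib import metadata

# Version the README reports as tested; other packages are not pinned here.
TESTED = {"torch": "1.9.0"}


def installed_version(dist_name):
    """Return the installed version string of a distribution, or None if absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def check_environment(packages=("torch", "torchvision", "detectron2")):
    """Map each package name to its installed version (None when missing)."""
    return {name: installed_version(name) for name in packages}


def matches_tested(name, version):
    """True/False if a tested version is known, None if there is nothing to compare."""
    expected = TESTED.get(name)
    if expected is None or version is None:
        return None
    # Local build tags such as "1.9.0+cu111" are compared on the part before "+".
    return version.split("+")[0] == expected


if __name__ == "__main__":
    for name, version in check_environment().items():
        print(name, version or "not installed", matches_tested(name, version))
```

If you changed the versions in the commands above, adjust `TESTED` accordingly.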

Dataset preparation

Download the datasets

Register datasets

- Generate COCO-format annotation files; the mmdetection tutorial may help.

- Change the dataset and annotation paths in adet/data/datasets/cis.py; see the detectron2 docs for more help.

# adet/data/datasets/cis.py

# change the paths

DATASET_ROOT = 'COD10K-v3'

ANN_ROOT = os.path.join(DATASET_ROOT, 'annotations')

TRAIN_PATH = os.path.join(DATASET_ROOT, 'Train/Image')

TEST_PATH = os.path.join(DATASET_ROOT, 'Test/Image')

TRAIN_JSON = os.path.join(ANN_ROOT, 'train_instance.json')

TEST_JSON = os.path.join(ANN_ROOT, 'test2026.json')

NC4K_ROOT = 'NC4K'

NC4K_PATH = os.path.join(NC4K_ROOT, 'Imgs')

NC4K_JSON = os.path.join(NC4K_ROOT, 'nc4k_test.json')
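For readers unfamiliar with the COCO annotation format mentioned above, here is a minimal sketch of how such a file can be assembled from polygon masks. It is an illustration only, not the script used to produce the released annotations: `build_coco`, `polygon_area`, the input tuple layout, and the category name `"camouflaged"` are all assumptions made for this example.

```python
import json


def polygon_area(xs, ys):
    """Area of a simple polygon via the shoelace formula."""
    n = len(xs)
    total = sum(xs[i] * ys[(i + 1) % n] - xs[(i + 1) % n] * ys[i] for i in range(n))
    return abs(total) / 2.0


def build_coco(images, instances, category_name="camouflaged"):
    """Assemble a COCO-style dict with a single category.

    images:    list of (file_name, width, height)
    instances: list of (image_index, polygon), where image_index is a 0-based
               index into `images` and polygon is a flat [x1, y1, x2, y2, ...]
               list in pixel coordinates
    """
    coco = {
        "images": [],
        "annotations": [],
        "categories": [{"id": 1, "name": category_name}],
    }
    for image_id, (file_name, width, height) in enumerate(images, start=1):
        coco["images"].append(
            {"id": image_id, "file_name": file_name, "width": width, "height": height}
        )
    for ann_id, (image_index, polygon) in enumerate(instances, start=1):
        xs, ys = polygon[0::2], polygon[1::2]
        x0, y0 = min(xs), min(ys)
        coco["annotations"].append({
            "id": ann_id,
            "image_id": image_index + 1,  # image ids start at 1 above
            "category_id": 1,
            "segmentation": [polygon],
            "bbox": [x0, y0, max(xs) - x0, max(ys) - y0],  # [x, y, width, height]
            "area": polygon_area(xs, ys),
            "iscrowd": 0,
        })
    return coco


if __name__ == "__main__":
    demo = build_coco([("example.jpg", 640, 480)],
                      [(0, [10, 10, 30, 10, 30, 20, 10, 20])])
    print(json.dumps(demo, indent=2))
```

The resulting dict can be written with `json.dump` to the annotation paths configured in adet/data/datasets/cis.py.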

Pre-trained models

Model weights: Baidu (password:l6vn) / Google / Quark

Visualization results

The visual results are produced by OSFormer with a ResNet-50 backbone trained on the COD10K training set.

- Results on the COD10K test set: Baidu (password:hust) / Google

- Results on the NC4K test set: Baidu (password:hust) / Google

Frequently asked questions

FAQ

Usage

Train

python tools/train_net.py --config-file configs/CIS_R50.yaml --num-gpus 1 \

OUTPUT_DIR {PATH_TO_OUTPUT_DIR}

Please replace {PATH_TO_OUTPUT_DIR} with your own output directory.
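The trailing `OUTPUT_DIR {PATH_TO_OUTPUT_DIR}` in the command is a config override given as a `KEY VALUE` pair; dotted keys such as `MODEL.WEIGHTS` address nested entries. The sketch below mimics that idea on a plain nested dict to show the mechanics. It is not detectron2's implementation: `apply_overrides` and `parse_value` are hypothetical helpers, and unlike a real config object this version silently creates keys that do not exist yet.

```python
import ast


def parse_value(raw):
    """Interpret numbers, booleans, lists, etc.; fall back to the raw string."""
    try:
        return ast.literal_eval(raw)
    except (ValueError, SyntaxError):
        return raw


def apply_overrides(cfg, opts):
    """Apply a flat [KEY1, VALUE1, KEY2, VALUE2, ...] list to a nested dict."""
    if len(opts) % 2 != 0:
        raise ValueError("overrides must come in KEY VALUE pairs")
    for key, raw in zip(opts[0::2], opts[1::2]):
        *parents, leaf = key.split(".")
        node = cfg
        for name in parents:
            # Walk down the dotted path, creating intermediate levels if needed.
            node = node.setdefault(name, {})
        node[leaf] = parse_value(raw)
    return cfg


if __name__ == "__main__":
    cfg = {"OUTPUT_DIR": "./output", "MODEL": {"WEIGHTS": ""}}
    apply_overrides(cfg, ["OUTPUT_DIR", "runs/exp1", "MODEL.WEIGHTS", "weights.pth"])
    print(cfg)
```

Several overrides can be appended to the same command line in this `KEY VALUE KEY VALUE ...` form.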

Eval

python tools/train_net.py --config-file configs/CIS_R50.yaml --eval-only \

MODEL.WEIGHTS {PATH_TO_PRE_TRAINED_WEIGHTS}

Please replace {PATH_TO_PRE_TRAINED_WEIGHTS} with the path to the pre-trained weights.

Inference

python demo/demo.py --config-file configs/CIS_R50.yaml \

--input {PATH_TO_THE_IMG_DIR_OR_FILE} \

--output {PATH_TO_SAVE_DIR_OR_IMAGE_FILE} \

--opts MODEL.WEIGHTS {PATH_TO_PRE_TRAINED_WEIGHTS}

- {PATH_TO_THE_IMG_DIR_OR_FILE}: an image directory or one or more image paths

- {PATH_TO_SAVE_DIR_OR_IMAGE_FILE}: where the visualizations will be saved

- {PATH_TO_PRE_TRAINED_WEIGHTS}: the path to the pre-trained weights
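Because `--input` accepts either a directory or individual image paths, it can help to preview which files a given argument would cover before running the demo. The helper below is a hypothetical sketch of that expansion, not the logic in demo/demo.py; the set of accepted suffixes is an assumption.

```python
from pathlib import Path

# Assumed set of image file types; adjust to your data.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def collect_images(inputs):
    """Expand directories (non-recursively) and keep individual image files."""
    found = []
    for item in inputs:
        path = Path(item)
        if path.is_dir():
            # Directory entries are added in sorted order.
            found.extend(
                p for p in sorted(path.iterdir())
                if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
            )
        elif path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            found.append(path)
    return found


if __name__ == "__main__":
    import sys
    for image_path in collect_images(sys.argv[1:]):
        print(image_path)
```

Paths that do not exist or are not images are skipped silently in this sketch; a stricter version could raise an error instead.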

Acknowledgement

This work is based on:

We also received help from mmdetection. Thanks to them for their great work!

Citation

If this helps you, please cite this work (SINet-V3):

@inproceedings{pei2022osformer,

title={OSFormer: One-Stage Camouflaged Instance Segmentation with Transformers},

author={Pei, Jialun and Cheng, Tianyang and Fan, Deng-Ping and Tang, He and Chen, Chuanbo and Van Gool, Luc},

booktitle={European conference on computer vision},

year={2022},

organization={Springer}

}

For SINet-V1 and SINet-V2, please cite the following works:

@article{fan2021concealed,

title={Concealed Object Detection},

author={Fan, Deng-Ping and Ji, Ge-Peng and Cheng, Ming-Ming and Shao, Ling},

journal={IEEE TPAMI},

year={2022}

}

@inproceedings{fan2020camouflaged,

title={Camouflaged object detection},

author={Fan, Deng-Ping and Ji, Ge-Peng and Sun, Guolei and Cheng, Ming-Ming and Shen, Jianbing and Shao, Ling},

booktitle={IEEE CVPR},

pages={2777--2787},

year={2020}

}