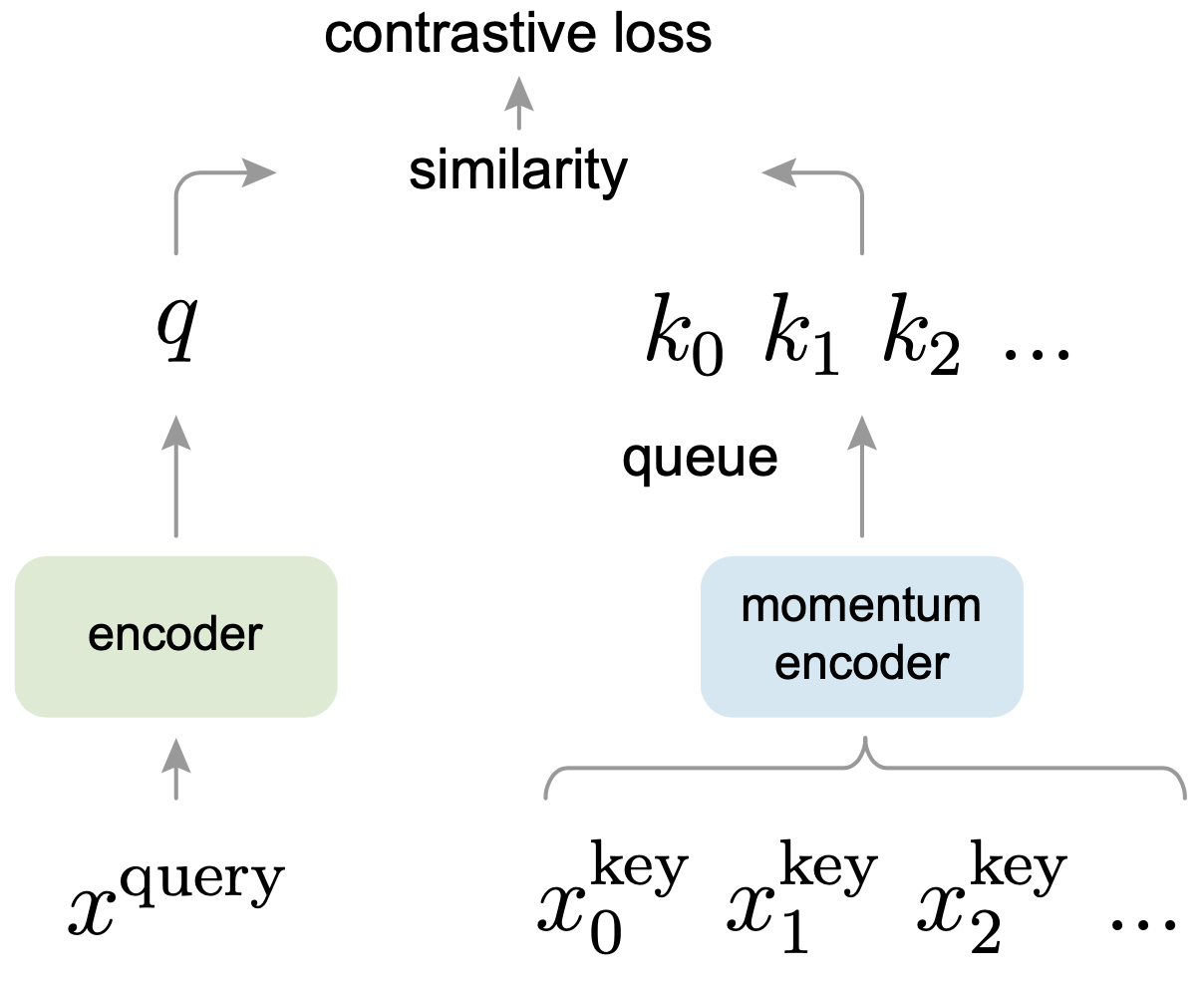

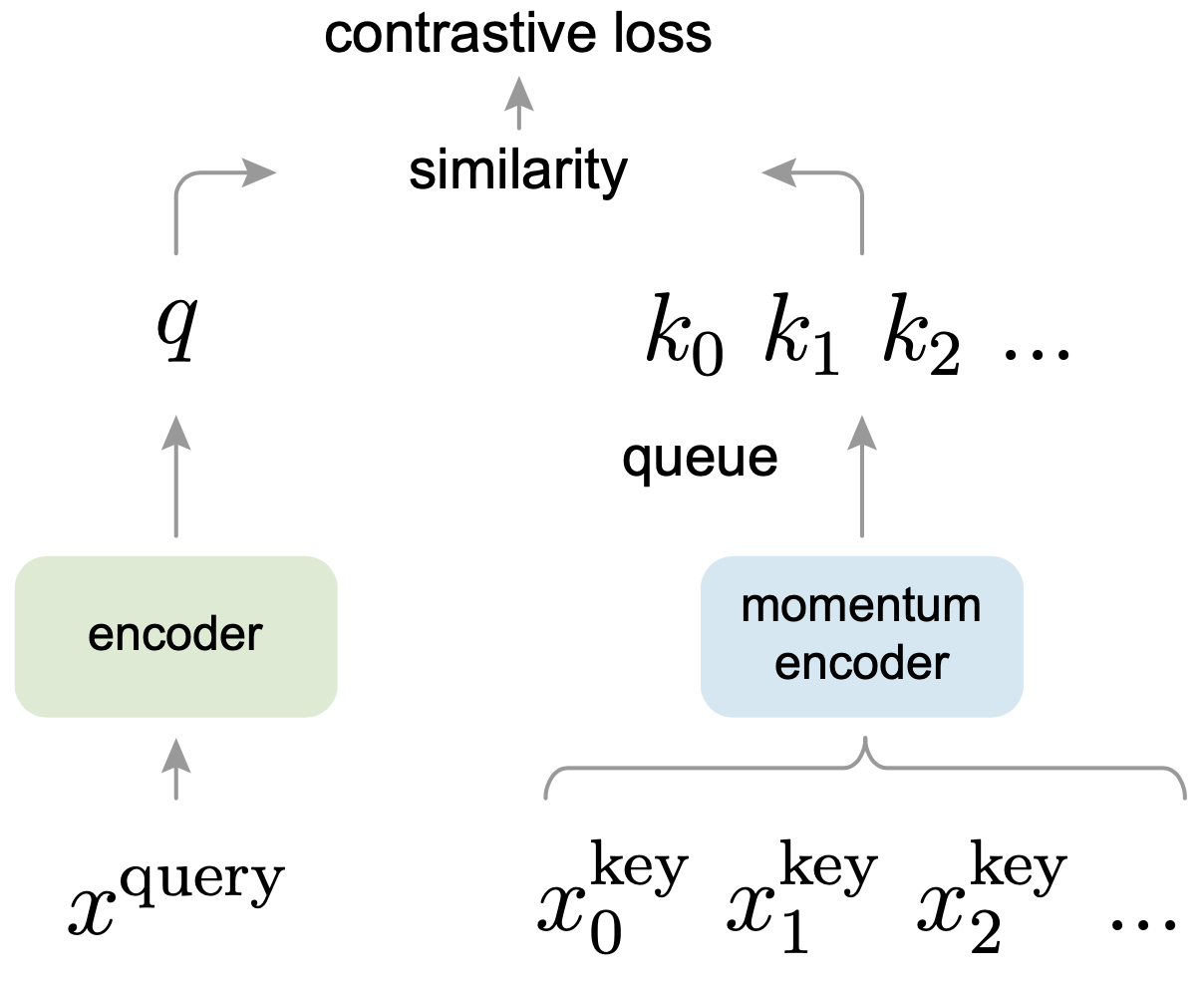

MoCo

A PyTorch implementation of MoCo based on the CVPR 2020 paper Momentum Contrast for Unsupervised Visual Representation Learning.

Requirements

conda install pytorch=1.6.0 torchvision cudatoolkit=10.2 -c pytorch

Dataset

The CIFAR10 dataset is used in this repo; PyTorch downloads it into the data directory automatically.

Usage

Train MoCo

python train.py --batch_size 1024 --epochs 50

optional arguments:

--feature_dim Feature dim for each image [default value is 128]

--m Negative sample number [default value is 4096]

--temperature Temperature used in softmax [default value is 0.5]

--momentum Momentum used for the update of memory bank [default value is 0.999]

--k Top k most similar images used to predict the label [default value is 200]

--batch_size Number of images in each mini-batch [default value is 256]

--epochs Number of sweeps over the dataset to train [default value is 500]
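To make the roles of `--momentum`, `--m` and `--temperature` more concrete, here is a minimal, framework-free sketch of the two core operations described in the MoCo paper: the momentum update of the key-side parameters and the temperature-scaled contrastive loss for a single query. It is an illustration only, not the code in `train.py`; the function names `momentum_update` and `contrastive_loss` are hypothetical, and plain Python lists stand in for tensors.

```python
import math


def momentum_update(key_params, query_params, momentum=0.999):
    """Move each key-side parameter slightly toward its query-side counterpart.

    Hypothetical helper: parameters are flat lists of floats here,
    whereas a real training loop would iterate over model tensors.
    """
    return [momentum * k + (1.0 - momentum) * q
            for k, q in zip(key_params, query_params)]


def contrastive_loss(query, positive_key, negative_keys, temperature=0.5):
    """InfoNCE-style loss for one query, with the positive key at index 0.

    `negative_keys` plays the role of the negative samples
    (their count corresponds to --m in this sketch).
    """
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    # Similarity of the query to the positive key, then to every negative key.
    logits = [dot(query, positive_key)]
    logits += [dot(query, neg) for neg in negative_keys]
    logits = [value / temperature for value in logits]

    # Cross-entropy against class 0, using log-sum-exp for numerical stability.
    top = max(logits)
    log_sum_exp = top + math.log(sum(math.exp(v - top) for v in logits))
    return log_sum_exp - logits[0]


if __name__ == "__main__":
    print(momentum_update([1.0, 2.0], [0.0, 0.0], momentum=0.9))
    print(contrastive_loss([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0]]))
```

A momentum close to 1 (such as the default 0.999) means the key-side parameters change very slowly, while a smaller temperature sharpens the softmax over the positive and negative similarities.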

Linear Evaluation

python linear.py --batch_size 1024 --epochs 200

optional arguments:

--model_path The pretrained model path [default value is 'results/128_4096_0.5_0.999_200_256_500_model.pth']

--batch_size Number of images in each mini-batch [default value is 256]

--epochs Number of sweeps over the dataset to train [default value is 100]
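The default `--model_path` appears to encode the training hyperparameters: its fields match the default values of `--feature_dim`, `--m`, `--temperature`, `--momentum`, `--k`, `--batch_size` and `--epochs`, in that order. The sketch below rebuilds the path under that assumption. The helper `default_model_path` is hypothetical, and the field order is inferred from the default values rather than confirmed by the source.

```python
def default_model_path(feature_dim=128, m=4096, temperature=0.5, momentum=0.999,
                       k=200, batch_size=256, epochs=500, results_dir="results"):
    """Rebuild a checkpoint path from training hyperparameters.

    Assumption: the file name joins the hyperparameters with underscores
    in the order they are listed under "Train MoCo".
    """
    fields = (feature_dim, m, temperature, momentum, k, batch_size, epochs)
    name = "_".join(str(value) for value in fields)
    return f"{results_dir}/{name}_model.pth"


if __name__ == "__main__":
    # With all defaults this reproduces the documented default path.
    print(default_model_path())
    # A run trained with other settings would presumably get a different name.
    print(default_model_path(batch_size=1024, epochs=50))
```

If the naming does follow this pattern, a model trained with non-default settings (for example `--batch_size 1024 --epochs 50`) would need its path passed explicitly through `--model_path` when running `linear.py`.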

Results

There are some differences between this implementation and the official implementation. The model (ResNet50) is trained on one NVIDIA GeForce GTX TITAN GPU:

- No Gaussian blur is used;
- The Adam optimizer with learning rate 1e-3 replaces the SGD optimizer;
- No linear learning rate scaling is used.

| Evaluation Protocol | Feature Dim | Momentum | Batch Size | Epoch Num | τ | K | Top1 Acc % | Top5 Acc % | Download |
|---|---|---|---|---|---|---|---|---|---|
| KNN | 128 | 0.999 | 256 | 500 | 0.5 | 200 | 80.9 | 99.1 | model \| tex8 |
| Linear | - | - | 256 | 100 | - | - | 86.3 | 99.6 | model \| 6me4 |