
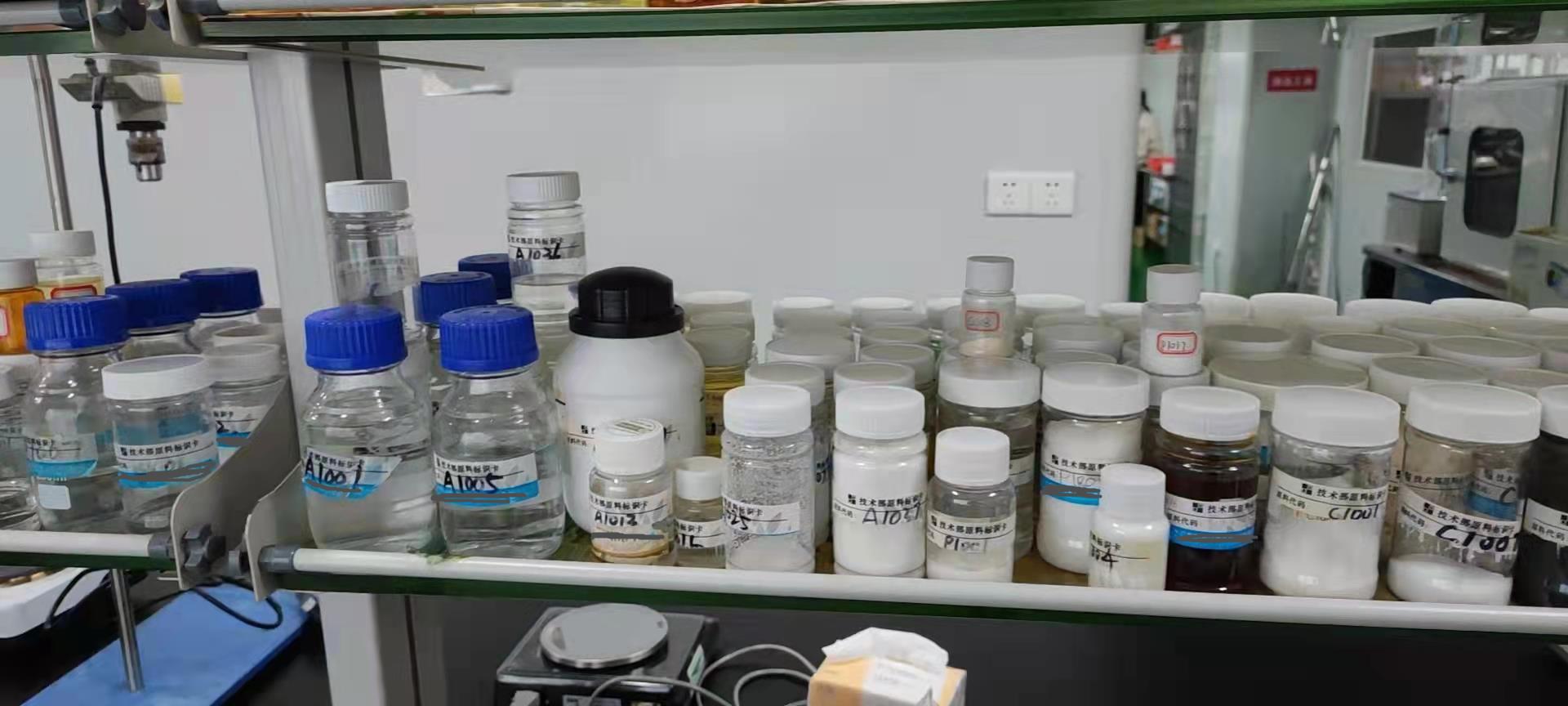

I. Project Background

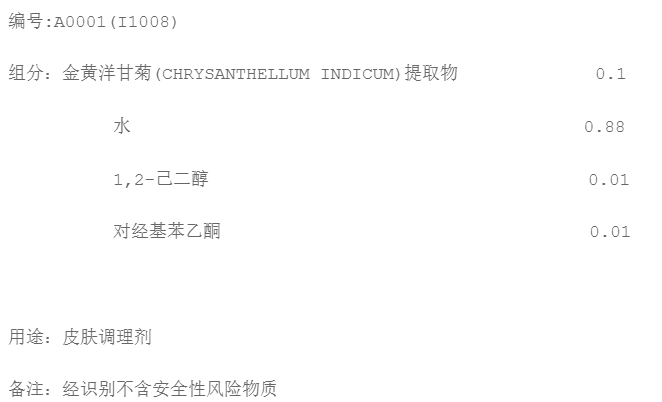

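- As shown in the figure, many chemical laboratories label their raw materials with specific codes. This makes the materials easier to manage, but finding information about a material means looking it up by its code, which is inconvenient. If you forget a material's precautions or dosage in the middle of an experiment, you have to stop and look it up, which can seriously disrupt the work. In real production, moreover, raw materials are often not single-component: specialized suppliers synthesize and sell them to chemical companies for purchase and further processing. Most of these materials have no definite name (because they are synthesized products), and their codes are not standardized (different manufacturers may use different coding schemes). Given these particular compositions and coding schemes, a convenient way to look up material information is very important. This project therefore combines AI, using object detection plus OCR, to retrieve material information quickly, reduce manual work, and improve productivity.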

II. Data Processing and Configuration

Unzip the dataset

!unzip -oq /home/aistudio/data/data128635/bottle.zip

Download PaddleDetection

!git clone https://gitee.com/paddlepaddle/PaddleDetection.git

Install dependencies and configure the environment

!pip install paddlepaddle-gpu

!pip install pycocotools

!pip install lap

!pip install motmetrics

import cv2

import os

from matplotlib import pyplot as plt

import numpy

os.environ['CUDA_VISIBLE_DEVICES'] = '0'

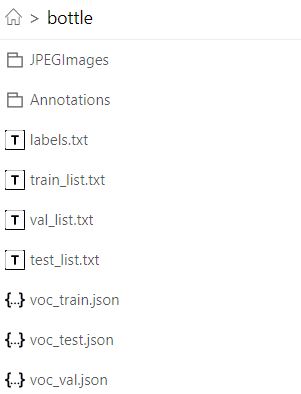

Split the dataset

!pip install paddlex

!pip install paddle2onnx

!paddlex --split_dataset --format VOC --dataset_dir bottle --val_value 0.1 --test_value 0.1

Convert the VOC dataset to COCO format

%cd PaddleDetection/

!python tools/x2coco.py \
        --dataset_type voc \
        --voc_anno_dir ../bottle/ \
        --voc_anno_list ../bottle/train_list.txt \
        --voc_label_list ../bottle/labels.txt \
        --voc_out_name ../bottle/voc_train.json

!python tools/x2coco.py \
        --dataset_type voc \
        --voc_anno_dir ../bottle/ \
        --voc_anno_list ../bottle/val_list.txt \
        --voc_label_list ../bottle/labels.txt \
        --voc_out_name ../bottle/voc_val.json

!python tools/x2coco.py \
        --dataset_type voc \
        --voc_anno_dir ../bottle/ \
        --voc_anno_list ../bottle/test_list.txt \
        --voc_label_list ../bottle/labels.txt \
        --voc_out_name ../bottle/voc_test.json
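Before training, it can help to confirm that each converted file actually contains images and annotations. The following is a minimal sketch, not part of the original project: `summarize_coco` is a hypothetical helper that relies only on the standard COCO top-level keys (`images`, `annotations`, `categories`).

```python
import json
from collections import Counter


def summarize_coco(path):
    """Return image, annotation, and per-category annotation counts for a COCO file."""
    with open(path, encoding="utf-8") as f:
        coco = json.load(f)
    images = coco.get("images", [])
    annotations = coco.get("annotations", [])
    # Map category ids to readable names; fall back to the raw id if a name is missing.
    names = {c["id"]: c["name"] for c in coco.get("categories", [])}
    per_class = Counter(names.get(a["category_id"], a["category_id"]) for a in annotations)
    return {
        "images": len(images),
        "annotations": len(annotations),
        "per_class": dict(per_class),
    }
```

With the working directory set to `PaddleDetection/`, a call such as `summarize_coco('../bottle/voc_train.json')` should report non-zero counts if the conversion succeeded.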

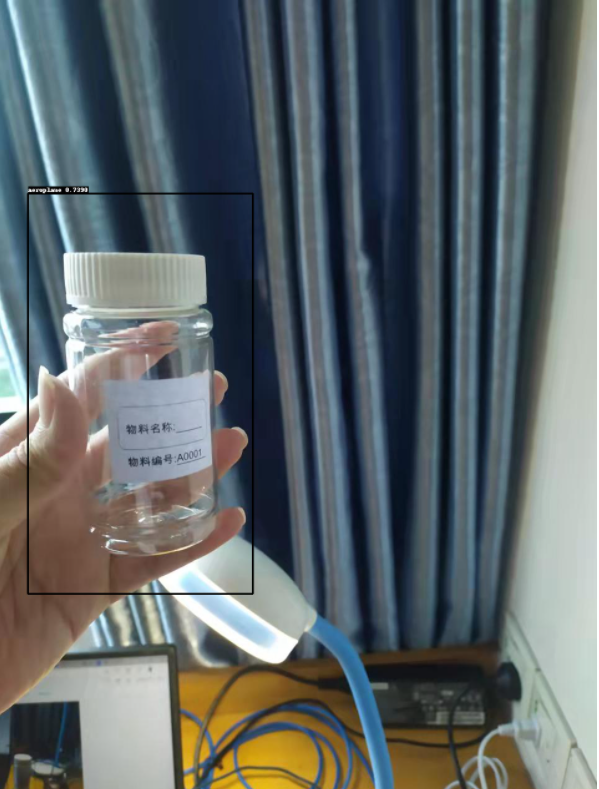

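III. Object Detection with PaddleDetection

This project trains the ppyolo_tiny model using the ppyolo_tiny_650e_coco configuration. Adjust the training settings as needed; they are not covered in detail here.

Edit the model configuration file before training; otherwise training will fail with an error.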

%cd

!python PaddleDetection/tools/train.py \
        -c PaddleDetection/configs/ppyolo/ppyolo_tiny_650e_coco.yml \
        --vdl_log_dir ~/log_crowdhuman/ppyolo_voc \
        --use_vdl True

Export the model

!python PaddleDetection/tools/export_model.py -c PaddleDetection/configs/ppyolo/ppyolo_tiny_650e_coco.yml -o weights=output/ppyolo_tiny_650e_coco/65.pdparams

%cd PaddleDetection/

Run inference

result=!python ./deploy/python/infer.py --model_dir=../output_inference/ppyolo_tiny_650e_coco --image_file=../1024.jpg

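PS:

Why does this project run object detection on the material bottle first?

Answer: OCR extracts every piece of text in the image. In practice many objects carry text, so running OCR directly is likely to pick up irrelevant text, which works against our task. The bottle-detection step added before OCR is intended to make sure that what the system finally extracts is the material code we need.

IV. Crop the Image

Extract the coordinates of the detected box for cropping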

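import numpy as np

# Find the first detection line in the captured infer.py output
index1 = 'class_id'
index2 = 'right_bottom'
temp = None
for dt in result:
    if index1 in dt:
        temp = dt
        break
print(temp)

# Slice the numbers out of the "left_top:[x1,y1],right_bottom:[x2,y2]" fields
b = temp.split(',')
x1 = int(float(b[2][11:]))
y1 = int(float(b[3][:-1]))
x2 = int(float(b[4][14:]))
y2 = int(float(b[5][:-1]))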
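The fixed character offsets used above break if the printed line changes even slightly (for example, an extra space). As an alternative, here is a minimal sketch that parses the same line with a regular expression. The line layout `class_id:…, confidence:…, left_top:[x,y],right_bottom:[x,y]` is an assumption inferred from those offsets, and `parse_first_box` is a hypothetical helper, not part of PaddleDetection.

```python
import re

# Assumed layout of one detection line printed by infer.py (inferred from the
# slicing offsets above); adjust the pattern if your version prints differently.
_BOX_RE = re.compile(
    r"class_id:\s*(?P<cls>\d+),\s*confidence:\s*(?P<conf>[\d.]+),\s*"
    r"left_top:\s*\[(?P<x1>-?[\d.]+),\s*(?P<y1>-?[\d.]+)\],\s*"
    r"right_bottom:\s*\[(?P<x2>-?[\d.]+),\s*(?P<y2>-?[\d.]+)\]"
)


def parse_first_box(lines):
    """Return (x1, y1, x2, y2) as ints for the first detection line, or None."""
    for line in lines:
        m = _BOX_RE.search(line)
        if m:
            return tuple(int(float(m.group(k))) for k in ("x1", "y1", "x2", "y2"))
    return None
```

Calling `parse_first_box(result)` on the captured output returns `None` when nothing was detected, which makes the "no bottle found" case easy to handle explicitly.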

Read the image

img =np.array(cv2.imread('/home/aistudio/1024.jpg'))

print(img.shape)

Expand the crop slightly so that the whole bottle is included

img2 = img[max(y1-20, 0):y2+20, max(x1-20, 0):x2+20]

plt.imshow(cv2.cvtColor(img2, cv2.COLOR_BGR2RGB))
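The padded crop can also be wrapped in a small reusable function that clamps the expanded box to the image bounds. This matters because a negative start index in a NumPy slice wraps around to the end of the array rather than stopping at the edge. The sketch below is illustrative; `crop_with_margin` is a hypothetical helper, and the 20-pixel default mirrors the margin used above.

```python
import numpy as np


def crop_with_margin(img, x1, y1, x2, y2, margin=20):
    """Crop the box (x1, y1)-(x2, y2) expanded by `margin` pixels, clamped to the image."""
    h, w = img.shape[:2]
    top = max(y1 - margin, 0)
    left = max(x1 - margin, 0)
    bottom = min(y2 + margin, h)
    right = min(x2 + margin, w)
    return img[top:bottom, left:right]


# Demo on a blank 100x200 image: a box near the top-left corner stays in bounds.
demo = np.zeros((100, 200, 3), dtype=np.uint8)
print(crop_with_margin(demo, 5, 10, 50, 95).shape)
```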

V. Fast Inference on the Cropped Image with the PaddleHub OCR Model

Install PaddleHub and related libraries

!pip install --upgrade paddlepaddle -i https://mirror.baidu.com/pypi/simple

!pip install --upgrade paddlehub -i https://mirror.baidu.com/pypi/simple

!pip install shapely -i https://pypi.tuna.tsinghua.edu.cn/simple

!pip install pyclipper -i https://pypi.tuna.tsinghua.edu.cn/simple

Import the required libraries

import paddlehub as hub

import cv2

import numpy as np

import matplotlib.pyplot as plt # plt is used to display images

import matplotlib.image as mpimg # mpimg is used to read images

Load the model

ocr = hub.Module(name="chinese_ocr_db_crnn_server")

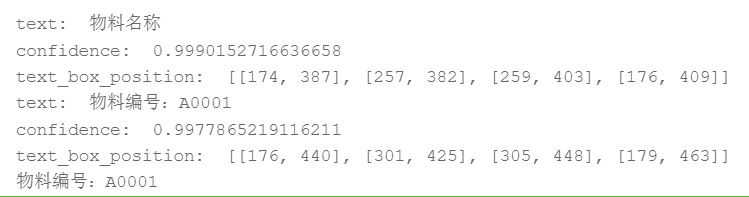

Feed the cropped image to the OCR model

np_images =[img2]

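Run inference with the PaddleHub OCR model

results = ocr.recognize_text(
    images=np_images,         # image data, ndarray of shape [H, W, C], BGR format
    use_gpu=False,            # whether to use the GPU; if so, set CUDA_VISIBLE_DEVICES first
    output_dir='ocr_result',  # directory for saved images, ocr_result by default
    visualization=True,       # whether to save the recognition result as an image file
    box_thresh=0.5,           # confidence threshold for detected text boxes
    text_thresh=0.5)          # confidence threshold for recognized Chinese text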

code = None
for result in results:
    data = result['data']
    save_path = result['save_path']
    for information in data:
        print('text: ', information['text'], '\nconfidence: ', information['confidence'], '\ntext_box_position: ', information['text_box_position'])
        # '物料编号' ("material code") is the label text printed on the bottle
        if '物料编号' in information['text']:
            code = information['text']
            print(code)
            break

Extract the code used as the lookup key (the last five characters)

code2 = code[-5:]

print(code2)

Look up the material record by its code

with open('/home/aistudio/{}.txt'.format(code2), encoding='utf-8') as f:
    for line in f:
        print(line)
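The extraction and lookup steps can be made a little more defensive, so that a missed OCR match or a missing record does not raise an exception. This is a minimal sketch under stated assumptions: `extract_code` and `lookup_material` are hypothetical helpers, the code is assumed to be the last five ASCII letters or digits after the `物料编号` label, and the "database" is assumed to be one `<code>.txt` file per material, as in the snippet above.

```python
import os
import re


def extract_code(ocr_items, keyword="物料编号", length=5):
    """Return the trailing `length` letters/digits after `keyword`, or None if absent."""
    for item in ocr_items:
        text = item["text"]
        if keyword in text:
            # Drop punctuation and spaces that OCR may insert after the label.
            tail = re.sub(r"[^0-9A-Za-z]", "", text.split(keyword, 1)[1])
            return tail[-length:] or None
    return None


def lookup_material(code, db_dir):
    """Return the contents of <db_dir>/<code>.txt, or None if no such record exists."""
    path = os.path.join(db_dir, f"{code}.txt")
    if not os.path.isfile(path):
        return None
    with open(path, encoding="utf-8") as f:
        return f.read()
```

For example, `extract_code(results[0]['data'])` followed by `lookup_material(code, '/home/aistudio')` reproduces the flow above, and returns `None` where the original snippet would fail.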

VI. Jetson Nano Deployment

(1) Hardware

- Jetson Nano

- Windows 10 PC

- CSI camera

(2) Software environment

Jetson Nano

- Ubuntu 18.04

- JetPack 4.4

- Python 3.6.9

- PaddlePaddle-gpu 2.2

Windows

- Python 3.7

- PaddlePaddle-gpu 2.2

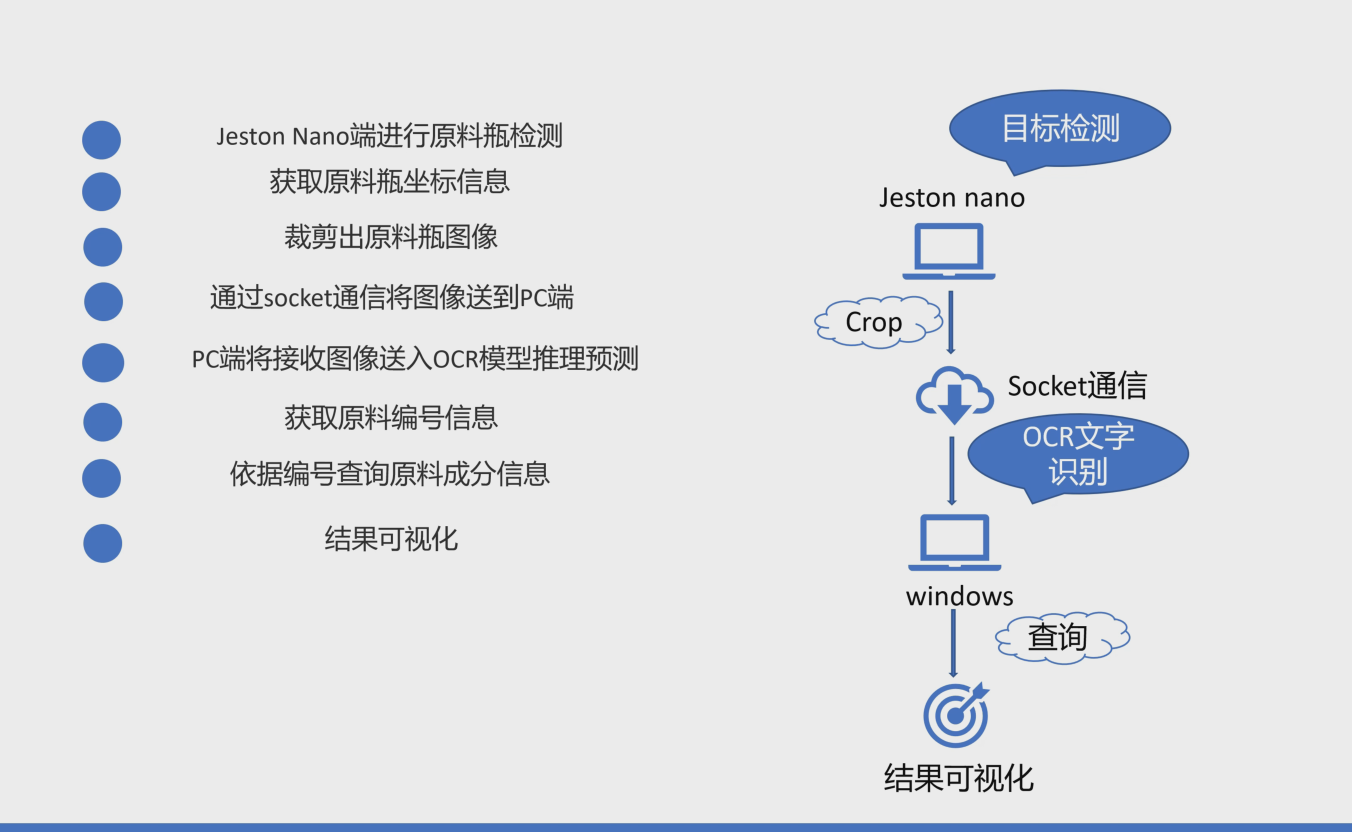

VII. Deployment Workflow and How It Works

Deployment workflow

How it works

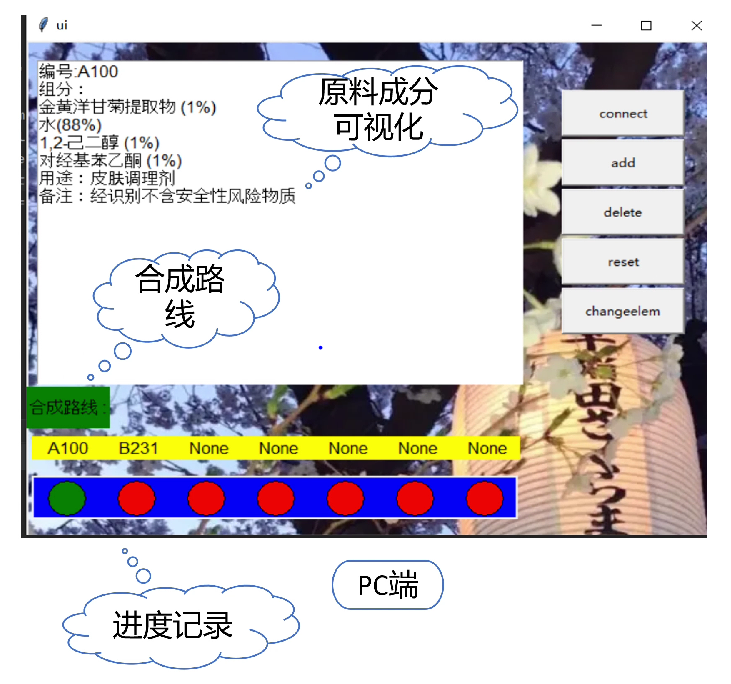

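- The main idea is as follows. The Nano detects the material bottle, uses the bottle's coordinates to crop the original image, and extracts the bottle image. A socket connection then links the Nano and the PC. After receiving the bottle image, the PC uses an OCR text-recognition model to extract the code, looks up the corresponding material composition, and displays it.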
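To illustrate the socket step, here is a minimal sketch of one common way to send an image over TCP: prefix each message with its length so the receiver knows how many bytes to read. This is an assumption for illustration only; the project's actual protocol is in the nano files, and `send_frame` and `recv_frame` are hypothetical names.

```python
import socket
import struct


def send_frame(sock, payload: bytes) -> None:
    """Send a 4-byte big-endian length header followed by the payload."""
    sock.sendall(struct.pack(">I", len(payload)) + payload)


def _recv_exact(sock, n: int) -> bytes:
    """Read exactly n bytes, since a single recv() may return fewer."""
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("socket closed before the full frame arrived")
        buf.extend(chunk)
    return bytes(buf)


def recv_frame(sock) -> bytes:
    """Receive one length-prefixed frame and return its payload."""
    (length,) = struct.unpack(">I", _recv_exact(sock, 4))
    return _recv_exact(sock, length)
```

On the Nano side the cropped image could be encoded with `cv2.imencode('.jpg', img2)[1].tobytes()` before calling `send_frame`, and the PC could decode the received bytes with `cv2.imdecode(np.frombuffer(data, np.uint8), cv2.IMREAD_COLOR)` before passing the image to the OCR model.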

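VIII. Core Files and Selected Code

(1) Jetson Nano initial setup

References:

Getting started with Jetson Nano

Jetson Nano is NVIDIA's entry-level GPU computing platform. It is a good way to get started with deploying deep learning models and is very easy to pick up.

System installation

Installing the system takes three steps:

1. Download the required software and image

- Jetson Nano Developer Kit SD card image

- SD memory card formatter for Windows

- balenaEtcher image flashing tool

2. Format the SD card and write the image

3. Connect the power supply and boot

After powering on, if the system reaches the desktop screen, the installation succeeded.

If you ran into problems during installation, or want to configure the board further (fan, Wi-Fi, package mirrors, remote desktop, and so on), see the following articles: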

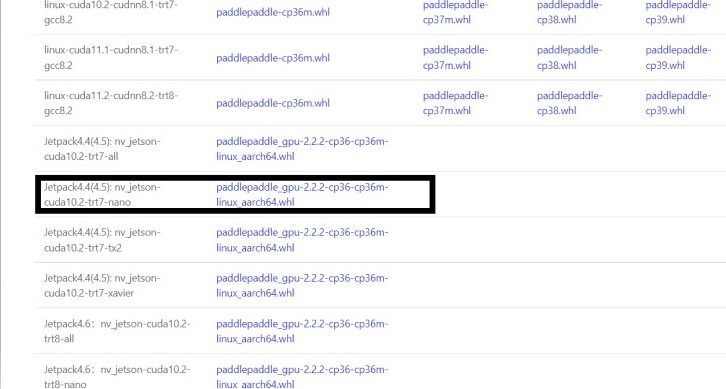

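PaddlePaddle installation

Download the PaddlePaddle build that matches your Nano

Note: avoid installing the newest PaddlePaddle release, as it may be incompatible.

Finally, install the downloaded whl package on the Nano to complete the installation.

(2) Jetson Nano deployment code

See the nano files for details

(3) PC deployment code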

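IX. Summary and Improvements

- This project uses detection and OCR to retrieve material information quickly. However, the model's accuracy still needs improvement and the dataset is not yet large enough. Future work will add more bottle categories to better meet practical needs.

- Beyond lookup, the project could also use material recognition to record and guide experiments in real time. For example, it could display the synthesis route, recognize the materials being used, alert the user in real time when the wrong material is used, and look up material information on the spot when the user forgets it.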